Hi,

I have just received a new Astra directly from Orbbec. It is the third Astra device I am using with Isadora. However, this new device does not appear to be recognised by the OpenNITracker on Windows or Mac. The two previously purchased devices are detected immediately and work well in Isadora 3 patches on both Windows and Mac (with Isadora on Mac running under Rosetta).

To rule out a faulty device, I connected it to an Orbbec TOP (released in February) in a TouchDesigner project on Mac, and confirmed that the device functions as expected, with RGB, IR, Pointcloud, and Depth outputs all accessible.

Therefore, a change in the device firmware may be what breaks compatibility with Isadora 3 on Windows and Mac. As Orbbec is not especially transparent about firmware, and does not make working with it easy or accessible for non-programmers, I am now stuck and cannot move forward with this device in Isadora projects.

@mark Also, please note that Orbbec appears to have released new Mac SDKs in the last fortnight.

I am aware of these previous forum threads related to the use of Orbbec on Windows and Mac:

https://community.troikatronix...

https://community.troikatronix...

Any assistance with getting this new Orbbec Astra working with OpenNI in Isadora on Mac would be greatly appreciated.

Best Wishes

Russell

Hi,

I hope you are well and in good spirits. Of course, you can use Isadora for a similar style of interactive display. In your example by the Japanese artist, there appear to be a great many particles in a dense pattern, so patch efficiency will likely be critical. Alternatively, consider a layered approach: for example, record a particle scene to video and then composite this video behind your interactive particles in a new scene. In this way you increase the visible quantity of particles while the real-time interactivity is confined to the top image layer. You can also calculate the maximum number of particles that can be present without affecting your frame rate. Use the ‘Performance’ watcher module to help make these calculations, based on what is going into your particle system's frequency and life span inputs. You may have noticed that the number of particles input is not dynamic: resetting it will kill all currently active instances. So it is a setting that needs to be calculated and set at the start.
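The particle-count calculation can be sketched as follows. It relies on a general steady-state rule of thumb for any emitter (not an Isadora function): particles alive at once ≈ emission rate × life span. The sketch assumes the frequency input means particles emitted per second and that life span is in seconds; both units, and the 20% headroom, are assumptions to check against your own particle actor.

```python
import math

# Assumption: rate is particles emitted per second, lifespan is in seconds.
# Check the actual units of your particle actor's inputs before relying on these numbers.

def live_particles(rate_per_second, lifespan_seconds):
    """Steady-state estimate: each second adds `rate` particles, each lasting `lifespan` seconds."""
    return rate_per_second * lifespan_seconds

def particle_count_setting(rate_per_second, lifespan_seconds, headroom_percent=20):
    """Value for the fixed particle count: the estimate plus some headroom
    (hypothetical 20% default), rounded up to a whole particle."""
    estimate = live_particles(rate_per_second, lifespan_seconds)
    return math.ceil(estimate * (100 + headroom_percent) / 100)

def max_rate_for_budget(particle_budget, lifespan_seconds):
    """Highest emission rate that stays within a particle budget your frame rate can sustain."""
    return particle_budget / lifespan_seconds

if __name__ == "__main__":
    print(live_particles(60, 5))            # 300 particles alive at once
    print(particle_count_setting(60, 5))    # 360 with 20% headroom
    print(max_rate_for_budget(2000, 4))     # 500.0 particles per second
```

The budget itself (2000 in the last line) is not something the formula can give you; it is the count you find your machine can sustain by watching the frame rate as you raise it.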

Regarding your primary question, there are many ways to determine and control the spatial placement of your particles in the 3D viewport of your scene, which leaves plenty of room for creative experimentation and can enhance the interactivity of your display. Here are some examples I have shared with the Isadora User Group on Facebook:

https://m.facebook.com/video.p...

Here is a demonstration patch for that: demo-particles-04.zip

https://m.facebook.com/video.php/?video_id=2138613486192707

These two examples use an external source for the x, y, and z positioning data of (1) a grid and (2) a sphere. These data sets were generated using MeshLab (free, open-source software). Alternatively, the distribution of particles can be generated randomly by Wave Generator modules set to random. You will want to spread the particles within confined ranges along your x and y axes. Both particle systems are made dynamic using the gravity field settings (which you already know about).
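To show what such position data sets contain, here is a short script that produces comparable lists of x, y, z positions for a grid, a sphere, and a random spread confined to set ranges. This is a sketch only: the Fibonacci (golden-angle) sphere spacing, the coordinate ranges, and the one-point-per-line text output are assumptions for illustration, not MeshLab's output or the format the demo patch expects.

```python
import math
import random

# Sketch only: spacing, ranges and the "x y z" text format are assumptions,
# not the format used by the demo patch.

def grid_points(cols, rows, spacing=0.1):
    """Flat grid in the x/y plane, centred on the origin, with z = 0."""
    x0 = -(cols - 1) * spacing / 2
    y0 = -(rows - 1) * spacing / 2
    return [(x0 + c * spacing, y0 + r * spacing, 0.0)
            for r in range(rows) for c in range(cols)]

def sphere_points(n, radius=1.0):
    """Roughly even points on a sphere surface (Fibonacci / golden-angle spiral)."""
    golden_angle = math.pi * (3 - math.sqrt(5))
    points = []
    for i in range(n):
        z = 1 - 2 * (i + 0.5) / n        # heights evenly spaced inside (-1, 1)
        ring = math.sqrt(1 - z * z)       # radius of the horizontal ring at that height
        theta = golden_angle * i
        points.append((radius * ring * math.cos(theta),
                       radius * ring * math.sin(theta),
                       radius * z))
    return points

def random_points(n, x_range=(-1.0, 1.0), y_range=(-0.5, 0.5),
                  z_range=(0.0, 0.0), seed=None):
    """Random positions confined to the given ranges, similar in spirit
    to Wave Generators set to random."""
    rng = random.Random(seed)
    return [(rng.uniform(*x_range), rng.uniform(*y_range), rng.uniform(*z_range))
            for _ in range(n)]

def to_text(points):
    """One 'x y z' triple per line."""
    return "\n".join(f"{x:.4f} {y:.4f} {z:.4f}" for x, y, z in points)

if __name__ == "__main__":
    print(to_text(grid_points(3, 2, 1.0)))
```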

Once you have set up the spatial distribution of your particles, the next step, and the key to the interactivity of the system, is to make the gravity field parameters inside your Isadora patch respond to a tracking system. Options include a camera-based vision system such as Isadora’s Eyes++ blob tracking, or potentially OpenNI depth imaging. In the example video by the Japanese artist, you can see a camera pressed against the bottom of the shopfront glass, with a short stem of wires leading to the bottom edge, which indicates that a tracking system is in use.
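Connecting a tracker output to a gravity field parameter mostly comes down to clamping one range and rescaling it into another, which is what a Limit-Scale Value actor does inside a patch, plus some smoothing so tracking jitter does not make the field twitch. The Python below only illustrates that arithmetic; the 0 to 100 tracker range, the -10 to 10 gravity range, and the smoothing factor are hypothetical values, so substitute the ranges your tracking actor outputs and your particle actor accepts.

```python
def limit_scale(value, in_min, in_max, out_min, out_max):
    """Clamp value to the input range, then map it linearly onto the output range.
    Works for inverted ranges too (e.g. in_min=100, in_max=0)."""
    lo, hi = min(in_min, in_max), max(in_min, in_max)
    v = max(lo, min(hi, value))
    t = (v - in_min) / (in_max - in_min)
    return out_min + t * (out_max - out_min)

class Smoother:
    """Exponential smoothing: each update moves a fraction `factor` of the way
    towards the new target. factor=1 means no smoothing; smaller values respond
    more slowly (the 0.2 default is a hypothetical starting point)."""

    def __init__(self, factor=0.2):
        self.factor = factor
        self.value = None

    def update(self, target):
        if self.value is None:
            self.value = target      # first reading: jump straight to it
        else:
            self.value += self.factor * (target - self.value)
        return self.value

if __name__ == "__main__":
    # Hypothetical ranges: tracked x from 0 to 100, gravity x from -10 to 10.
    smoother = Smoother(factor=0.5)
    for tracked_x in (50, 75, 75, 120):
        gravity_x = smoother.update(limit_scale(tracked_x, 0, 100, -10, 10))
        print(round(gravity_x, 3))
```

For example, a tracked x of 75 on the hypothetical 0 to 100 scale becomes a gravity x of 5.0 before smoothing, and anything outside the tracker range is clamped to the ends of the gravity range.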

After all, it is a comparatively simple interactive system: the only input to consider is the motion of people passing by. How would it respond to someone dancing into it? You also have to consider who it is for; the passerby appears uninterested, but in this case the camera documenting the scene as a 'witness' is the real audience.

Best wishes

Russell

Dear @bonemap

Thanks to your guide, I was able to learn a lot about 3D models and build different systems, including this one, which goes in a different direction from the original examples: https://www.instagram.com/reel/C4jL42CvyQY/?igsh=M3BwZzNwZnZheHk4

My specific question now is this: do you think it is possible to build a system of particles/3D models that stay still and only shake or move when someone moves or passes by? That is, leaving behind the "waterfall" type of example, with its continuous (ascending or descending) movement: a system that fills the entire screen but remains still until someone moves or passes by.

Something like this? https://www.instagram.com/p/C5V9L2qr3Nf/?igsh=bHY5aXFqYm9zYWxw

Thanks a lot !

Best,

Maxi-RIL

Could you also use a black rectangle from the shapes actor?

Cheers,

Hugh

If you use a transparent .png image, you will need to set the "use alpha" input of the 3D player to "on" and the background color of the video mixer to transparent.

Best regards,

Jean-François

My advice is the same as @jfg's: the original texture image file (JPEG or PNG) can be replaced with an image file filled with black. All you need to do is create a black image file with the same name and format in the same folder as the original image file, then reimport the 3D sphere into the media bin.

However, if the background behind the sphere is not black, you will then see the black texture. If your texture file is already a PNG, you could make the texture image fully transparent. With a transparent PNG file assigned to the 3D sphere, you will be able to make it disappear, depending on the settings for showing back faces and lighting in the scene, and on whether you have more than one 3D asset layered in the scene.
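If no image editor is at hand, a few lines of Python (standard library only) can write a solid black or fully transparent PNG. This is a sketch that covers only the PNG case, not JPEG; the file name and size in the usage line are placeholders, and the result still has to carry the same name as the original texture and sit in the same folder before you reimport the sphere. A transparent texture will also need "use alpha" switched on in the 3D player.

```python
import struct
import zlib

def _chunk(kind: bytes, data: bytes) -> bytes:
    """One PNG chunk: big-endian length, 4-byte type, data, CRC-32 of type + data."""
    crc = zlib.crc32(kind + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)

def solid_png(width: int, height: int, rgba=(0, 0, 0, 255)) -> bytes:
    """Encode a single-colour 8-bit RGBA PNG.
    (0, 0, 0, 255) is opaque black; an alpha of 0 gives a fully transparent image."""
    # IHDR: width, height, bit depth 8, colour type 6 (RGBA), compression 0, filter 0, no interlace.
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 6, 0, 0, 0)
    # Every scanline starts with a filter-type byte; 0 means "no filter".
    row = b"\x00" + bytes(rgba) * width
    raw = row * height
    return (b"\x89PNG\r\n\x1a\n"
            + _chunk(b"IHDR", ihdr)
            + _chunk(b"IDAT", zlib.compress(raw))
            + _chunk(b"IEND", b""))

def write_png(path, width, height, rgba=(0, 0, 0, 255)):
    """Write the image to disk (overwrites any existing file at `path`)."""
    with open(path, "wb") as f:
        f.write(solid_png(width, height, rgba))

if __name__ == "__main__":
    # Placeholder name and size: use the original texture's file name and dimensions.
    write_png("texture.png", 1024, 512, rgba=(0, 0, 0, 0))   # fully transparent
```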

Best wishes

Russell

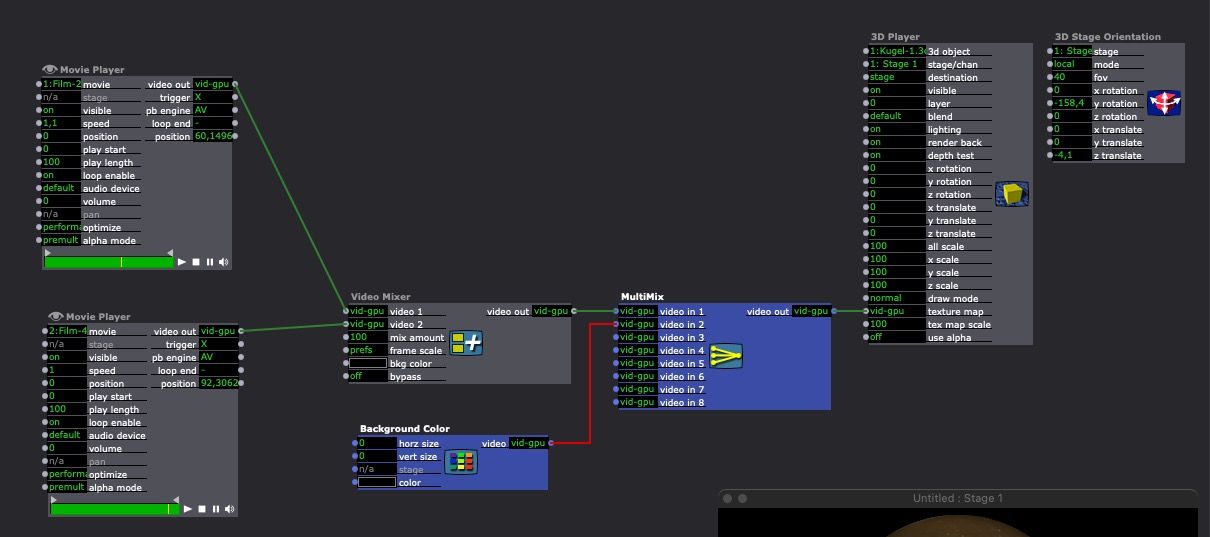

I think it appears every time there is no signal on the texture map input. I have tried it with only two video players and a video mixer. If both players are off, I see the original texture of the 3D object. I have also tried two working solutions:

1- make the texture of the 3D object a black picture

2- add a background color and a multi mix actor after the video mixer.

I hope this helps.

Best regards,

Jean-François

Hi :) I am currently working with different surfaces on a sphere. I have applied a texture to the sphere only so that I can use other surfaces on it, that is, photos and videos. The videos need to start at a certain point, so I turn them on while fading in. This means I do not intend to see the original texture; I just want to fade in a video that starts from the beginning. I want to be able to fade different layers in and out, and most of the time this works. My problem is that sometimes, if I turn the knob to fade in too quickly (the knob also turns on a video, so that it starts at the right time), the original texture of the ball shows for a split second. So I want to ask: is there a workaround to make sure this doesn't happen? I have tried starting the video a little earlier using some limit scale values on the nano controller, but I still sometimes have the problem.

Also, I found that I can change the length of the video from 0 and fade in that way, but then I cannot fade out with the same knob, because it is also connected to the video and the transition won't be smooth. I would like to be able to control the timing of the fade-in so that it is smooth with the music :) Does anyone have ideas, if it is clear what I mean? I also wonder whether I need to make a new black sphere texture, but I'm not sure it will fix the problem. I hope this makes sense?

All the best

Eva

It can be done in a myriad of ways, using many hardware and software combinations. None of them is inherently easy or hard; it all depends on skill and, in the end, time and money.

Hi there,

I am trying to get a camera feed from my DeckLink Quad 2 card using the Capture Camera to Movie actor. I have a Mac Studio M2. The DeckLink Quad 2 card is mounted in an xMac Studio expansion system, which is connected to the Mac Studio via Thunderbolt.

When I try the Capture Stage to Movie actor, it works as expected: a file is generated (or replaced) as intended.

However, part of what I am trying to do is capture the audio, which the Capture Stage to Movie actor does not do, so I need to use the Capture Camera to Movie actor. When I use that actor, no file appears, even though it looks like it is recording.

When I switch the camera on the Capture Camera to Movie actor to a USB camera, it works as intended, creating the file with the audio feed.

I have the latest Blackmagic driver and Isadora version, and my system software is updated to the latest version.

Any ideas what might be wrong, or ideas for a workaround?