Guru Session #13: Body Tracking with Depth Cameras (Fri May 8th, 6pm CEST/5pm GMT/12pm EDT/9am PDT)

-

Just for clarification, the Kinect actor native to Isadora has not been released into the wild yet, right? It's still only available in beta?

-

It is released in public beta and can be found on the plugin page (https://troikatronix.com/plugi...)

Be aware that the above version only works with the Kinect 1 (not the Kinect for Xbox One).

-

@juriaan I was just about to start looking into doing the same thing. I'd love to know more, if that's ok?

-

Sure! I'll tag you in the thread that I'm planning to write about it.

-

Looking forward to this one... I've been wrestling with my Kinect 1 for a couple of days...

-

Looking forward to the Guru session.

Loaded up the OpenNI Tracker for Kinect v1 and it's way better than the old NI Mate from years ago. I adapted a 3D cube that I normally control with a Wiimote so that its position in 3D space is controlled by my right hand (x, y, z) and its spin by my left hand (x, y). So far this patch is developed for a stationary performer using hands only, and I noticed a couple of problems:

1- Noise: even when all limbs are completely still, there is still a small float value changing, which causes unwanted movement. I need to find a way to either gate it out with a small threshold, so the gate closes and stops the small values from passing, or some other circuit that can ignore the tiny changes in the data when the performer is still. Any ideas?

2- When your arm is fully extended (hand z), the x/y range is physically very limited, so I need a way to mathematically and progressively scale the usable range for x and y based on the z value. I don't really know what math and actor combo would accomplish this. Does anyone have any ideas?

I have made a short demo video showing the patch in action, and included the Kinect and scaling section of my patch in case anyone is interested in adapting it or suggesting improvements to the concept. Thanks :)

-

@demetri79 said:

Noise- even when all limbs are completely still

Did you try the smoothing property for this?

In terms of the 3D rotation, there is a tutorial file download that provides the three.js quaternion rotation JavaScript module for that: https://troikatronix.com/plugi...

Kind Regards

Russell

-

@demetri79 Did you try the Smoother actor?

-

@juriaan thanks

-

Yes, I have all the skeleton tracker outputs feeding Limit-Scale Value actors, then Smoothers. I should have included a screenshot of the actors for people who didn't want to open the patch and have a look. This doesn't totally eliminate the jitter while still allowing for a responsive reaction time. I don't want to completely choke the output, otherwise once I move my hand it will take a while before the corresponding object motion happens. I'll just have to keep experimenting with different approaches.
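
One way to think about the jitter-versus-responsiveness trade-off described above is a deadband (hold-until-moved) gate placed after the tracker output. The following is only a minimal sketch of that idea in Python, not an Isadora actor or anything from the session materials; the class name `Deadband` and the threshold value are hypothetical and would need tuning against the real joint data.

```python
class Deadband:
    """Hold the last accepted value until the input moves by more than `threshold`.

    Hypothetical helper for illustration: small fluctuations around the held
    value are ignored, while a larger move passes through on the same frame,
    so there is no smoothing lag.
    """

    def __init__(self, threshold):
        self.threshold = threshold
        self.held = None  # no value accepted yet

    def update(self, value):
        # Accept the first sample, or any sample far enough from the held one.
        if self.held is None or abs(value - self.held) > self.threshold:
            self.held = value
        return self.held


if __name__ == "__main__":
    gate = Deadband(threshold=0.01)
    for sample in [0.500, 0.504, 0.497, 0.520, 0.515]:
        print(sample, "->", gate.update(sample))
```

A gate like this trades lag for a slight stepping on very slow movements, so a light smoother after it (rather than a heavy one before it) may be worth trying.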

The thing I am looking for is a math equation to progressively scale the hand's x and y values based on its z value.

My scenario is not an actual 3D file (.3ds); mine is made in Quartz Composer (CI) so I can input a different video to each side of the cube. It looks 3D but isn't actually, so the Javascript actor provided in the tutorial for the sphere doesn't apply to my situation. I would have tried to use a .3ds cube, but you can only use one texture input in Isadora, correct? That means I can only play the same video on all sides, as opposed to what I want, which is a different video on each side. My Quartz cube actually has 7 video inputs (6 sides + a background behind the cube). That is what I would want in the 3D world, but it seems unachievable currently unless I am mistaken. My version also has other added benefits, like being able to manipulate all of the dimensions in real time with an essentially infinite width, height, and depth, so I just need to work out some better scaling math.

The .3ds files do have a distort parameter, but it is very limited in how much it can stretch and reshape compared to what mine can do. That is another aspect I would like to explore, to see if it is possible to do more extreme reshaping of .3ds files.
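
One possible form for such an equation, offered as a sketch under stated assumptions rather than a tested solution: if the hand position is measured relative to the shoulder and the arm has length R, then with the hand pushed forward by z the sideways reach left over is roughly sqrt(R^2 - z^2). Dividing x and y by that remaining reach stretches the shrinking physical range back to a constant -1..1 range. The function name, the shoulder-relative coordinates, the arm length, and the `min_reach_fraction` floor are all assumptions for illustration.

```python
import math


def scale_xy_by_reach(x, y, z, arm_length, min_reach_fraction=0.2):
    """Normalize hand x/y to -1..1 by the sideways reach available at depth z.

    Assumes x, y, z are hand coordinates relative to the shoulder, in the
    same unit as arm_length (hypothetical setup, not from the patch).
    """
    # Clamp z so the square root never sees a negative number.
    z_clamped = max(0.0, min(abs(z), arm_length))
    reach = math.sqrt(arm_length ** 2 - z_clamped ** 2)
    # Floor the reach so the gain does not explode at full extension.
    reach = max(reach, min_reach_fraction * arm_length)
    nx = max(-1.0, min(1.0, x / reach))
    ny = max(-1.0, min(1.0, y / reach))
    return nx, ny


if __name__ == "__main__":
    # Same normalized output for a smaller physical move when the arm is extended.
    print(scale_xy_by_reach(0.30, 0.0, 0.00, arm_length=0.6))
    print(scale_xy_by_reach(0.18, 0.0, 0.48, arm_length=0.6))
```

The floor on the reach matters: without it, tracker noise near full extension would be amplified heavily, which would make the jitter problem worse.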

-

@demetri79 said:

I would have tried to use a .3ds cube, but you can only use one texture input in Isadora, correct? That means I can only play the same video on all sides, as opposed to what I want, which is a different video on each side. My Quartz cube actually has 7 video inputs (6 sides + a background behind the cube). That is what I would want in the 3D world, but it seems unachievable currently unless I am mistaken.

Well, if you have a single cube wrapped with one texture, you can most definitely get a separate image on each side. It's simply a matter of mapping the images into the right place in the texture you're feeding into the texture map. I would use a set of Matte or Matte++ actors (one for each side) to get the individual images into the right place in the texture, and then feed the final output of that to the texture map input of the 3D Player actor.

I'm sorry I can't provide an example at the moment. I'm busy preparing for today's session. But maybe that's enough to get you going.
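
To make the "right place in the texture" idea concrete, here is a small Python sketch that computes where each of six face images would sit in one combined texture. It assumes the cube model's UV map lays the faces out in a 3x2 grid in the order shown, which is purely hypothetical: the real layout depends on how the particular .3ds cube was unwrapped, and the resulting pixel rectangles would still have to be translated into whatever position and size units the Matte actors expect.

```python
def atlas_rects(tex_w, tex_h, cols=3, rows=2):
    """Return {face: (x, y, width, height)} in pixels for a grid texture atlas.

    The face order and the 3x2 grid are assumptions for illustration only.
    """
    faces = ["front", "back", "left", "right", "top", "bottom"]
    tile_w = tex_w // cols
    tile_h = tex_h // rows
    rects = {}
    for i, face in enumerate(faces):
        col, row = i % cols, i // cols  # fill left to right, then next row
        rects[face] = (col * tile_w, row * tile_h, tile_w, tile_h)
    return rects


if __name__ == "__main__":
    for face, rect in atlas_rects(1536, 1024).items():
        print(face, rect)
```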

Best Wishes,

Mark

-

Dear Community,

Here are the links for Guru Session #13: Body Tracking with Depth Cameras

Watch the Live Stream (also good for viewing later)

Download the session materials. (This upload will not be complete until 5:50pm! Please don't try to download before that time!)

See you in a few minutes!

Best Wishes,

Mark

-

The live stream says private only. It has probably not started yet, but usually you had it up ten minutes before.

-

It says private session

-

@mark YouTube says the video is private!?

-

Video private

-

Thanks for trying to answer the question in the broadcast.

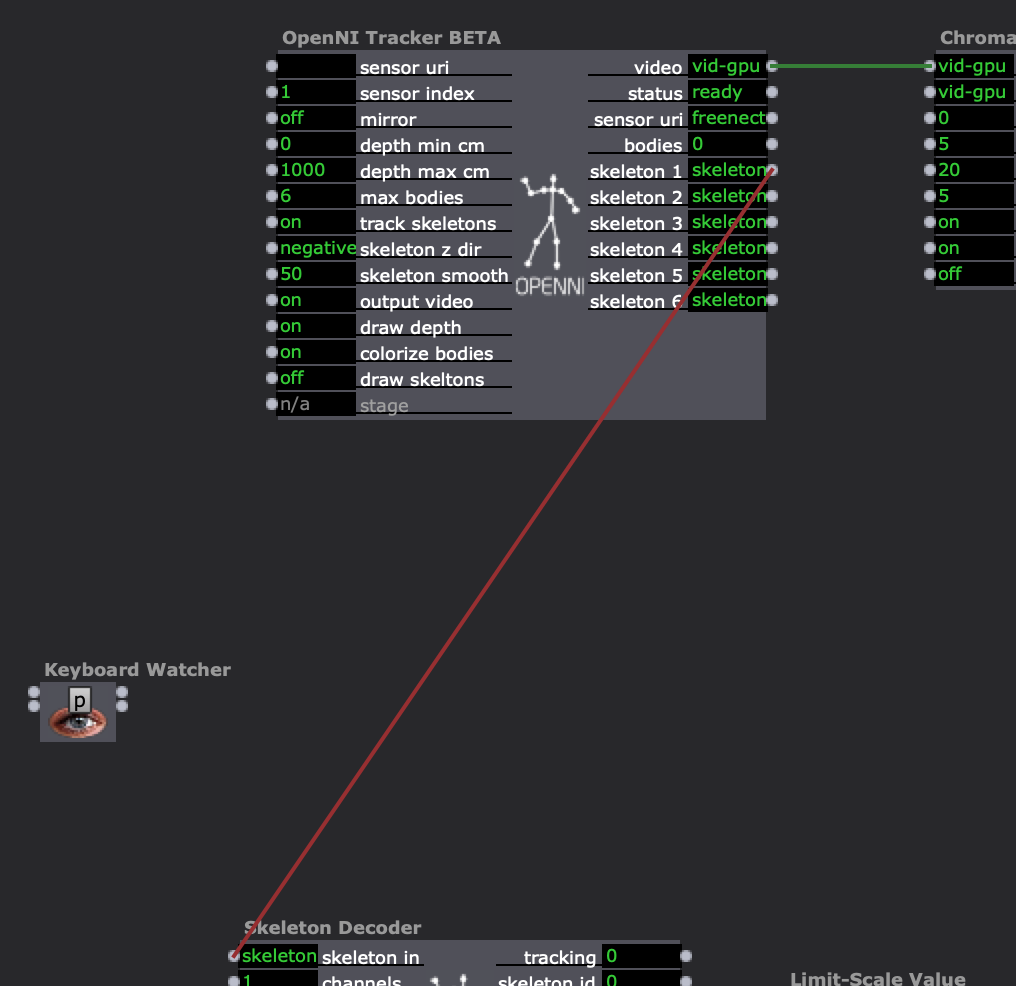

Sorry, I couldn't get the Kinect to be recognised, just a black screen. I have attached the Isadora screen for info. There appears to be an error on the status and on the sensor URI. I closed down Izzy and reconnected, but unfortunately no joy.

Thank you

Gavin

-

During the session I mentioned in the chat our research on OSC over internet for BH.

Keen to see if it works with all this data from the skeleton decoder (I bet it will be fine). Here are our notes in Dutch (hope translate works for you guys):

OSC transmit over internet (various locations)

credits to my HKU colleagues Tjerk Stoop, Tony Schuite & Simone van Dordrecht.

-

Sadly, I still seem to have an incomplete OpenNI Tracker.

(I re-downloaded, got Jurriaan's working one, and rebooted after throwing out previous versions, all to no avail. My version of the plugins is from today, 16.56.)

I am connecting to the Kinect, but my numbers are in a quirky range; I would love to adjust the m/cm/mm thing. Below is what I see (I checked, but there are no hidden properties).

Any ideas or advice?