Alpha Mask with Live Video

-

I tried the Contrast and Desaturate actors, and they helped a bit more, but since this chain of actors still revolves around luminance, I am unable to key out other bright spots. I am currently testing it in my apartment right next to the window, so I imagine a more controlled color and lighting environment will help. I'm essentially looking for the magic lasso tool from Photoshop, but for live video.
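To illustrate why a luminance-based chain can't separate a body from a bright window, here is a minimal Python sketch of a plain luminance key. It is not Isadora's implementation; the frame layout (rows of `(r, g, b)` tuples) and the Rec. 601 luma weights are assumptions for illustration. Any pixel brighter than the threshold lands in the mask, whatever it belongs to.

```python
# Minimal luminance-key sketch (assumption: a frame is a list of rows of
# (r, g, b) tuples with 0-255 channels; not Isadora's actual code).

def luma(rgb):
    """Rec. 601 luma approximation of an (r, g, b) pixel, range 0-255."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b

def luminance_key(frame, threshold):
    """Binary mask: 255 where luma >= threshold, otherwise 0."""
    return [[255 if luma(px) >= threshold else 0 for px in row] for row in frame]

# A lit body and a bright window both pass the key; only the dark wall is removed.
body = (200, 170, 150)
window = (250, 250, 250)
wall = (40, 40, 40)
mask = luminance_key([[body, window, wall]], threshold=128)
print(mask)  # [[255, 255, 0]]
```

Because the key only sees brightness, a controlled environment (darker background, no window in frame) is what actually separates the body from everything else.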

http://notabenevisual.com/?p=443

The link above is to a video of the concept I am trying to achieve. My guess is that they somehow keyed out the bodies, made a mask from them, and projected their silhouettes directly onto the wall.

-

I would go for IR lighting on the screen and an IR-capable camera, which will see the moving body as a shadow. You may need to filter the IR out of the projector's light.

-

This thread has a lot of information that might help you:

http://troikatronix.com/community/#/discussion/265/tracking-two-dancers

-

This YouTube video that Mark posted about the technology used in 16 Revolutions can help you as well.

Best,

Michel

-

Hi there,

I'm in the same boat, except I am using a Kinect + Isadora. I'm trying to create an effect similar to this one created by Graham:

http://www.youtube.com/watch?v=Spd77d6yZ-s&list=UUhr7aQm3W7xQmn4YblveryA&index=21

Could someone give me a lead, please?

-

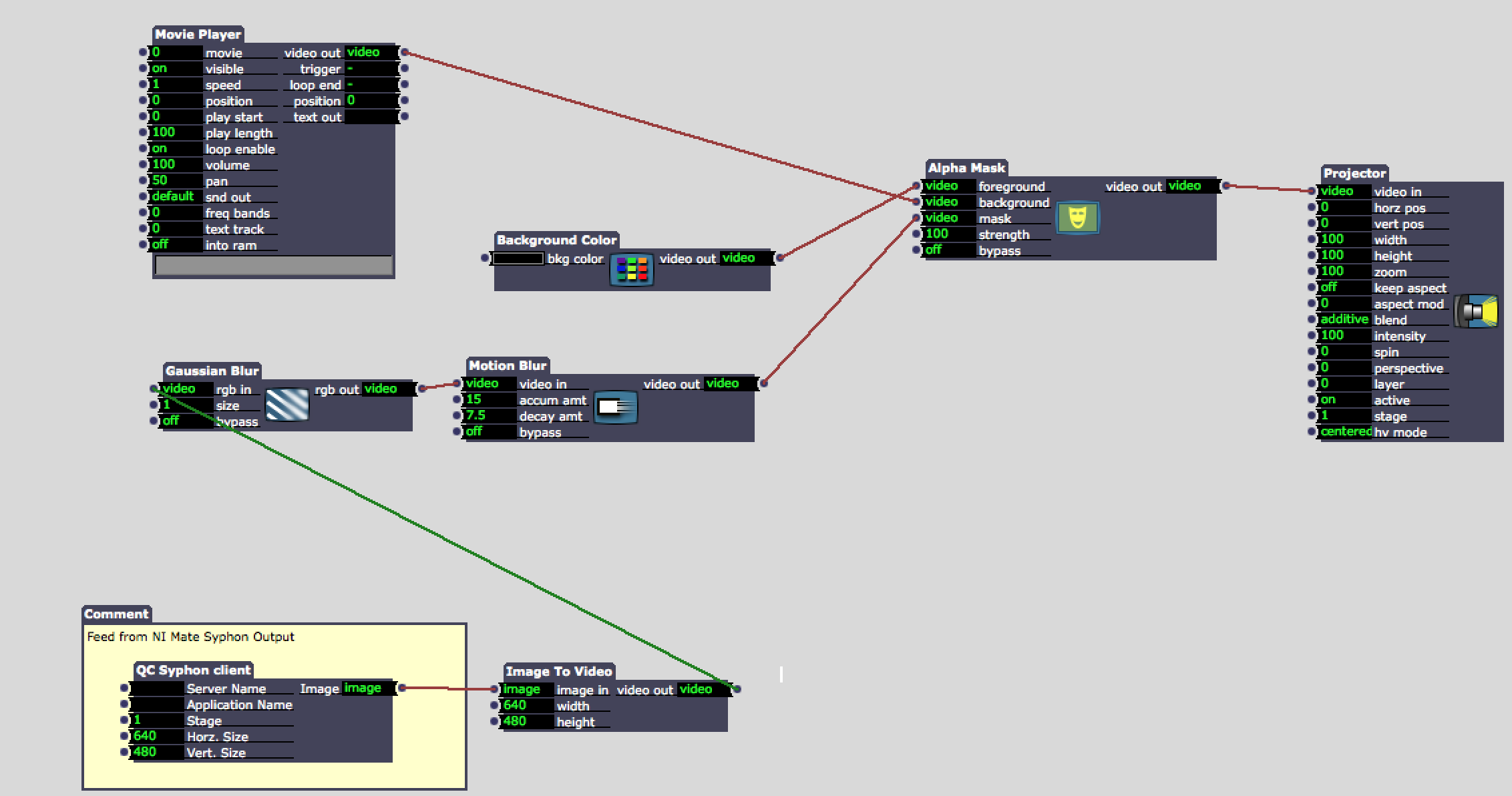

I never saved that patch; it was a quick demo I did for some ex-students, but it was something along these lines (open the image in a new tab, it's quite big).

-

@Skulpture I noticed in your video that the mask has a nicely defined, smooth edge compared to the raw data the Kinect gives you. Is that done by the Gaussian Blur and Motion Blur actors, or by NI Mate? What happens without the blur filters?

Thanks.

--8

-

Yeah, it nearly always needs some blur.

I used to knock old CCTV cameras slightly out of focus to get similar results rather than adding blur in software. But as machines got faster, I started doing it in software instead. Normally a Gaussian Blur of 1 or 2 works nicely.

-
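The blur advice amounts to softening the hard edge of a binary mask so the silhouette doesn't look jagged. Below is a minimal Python sketch of a separable Gaussian blur on a mask stored as a list of rows. The `sigma` value is an assumption and does not necessarily correspond to the units of Isadora's Gaussian Blur actor.

```python
import math

def gaussian_kernel(sigma):
    """1-D Gaussian weights covering +/- 3 sigma, normalised to sum to 1."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(x * x) / (2 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur_1d(values, kernel):
    """Convolve one row with the kernel, clamping indices at the borders."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)  # clamp to the nearest edge pixel
            acc += w * values[j]
        out.append(acc)
    return out

def gaussian_blur(mask, sigma=1.0):
    """Separable 2-D Gaussian blur: blur every row, then every column."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in mask]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]  # transpose back to rows

# A hard black/white edge becomes a smooth ramp after a small blur.
hard_edge = [[0, 0, 0, 255, 255, 255] for _ in range(3)]
soft_edge = gaussian_blur(hard_edge, sigma=1.0)
print([round(v) for v in soft_edge[1]])
```

A separable blur (rows, then columns) gives the same result as a full 2-D Gaussian kernel at a fraction of the cost, which matters when the mask is recomputed every frame.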

Hi Guys,

Thanks to @Skulpture, I was able to create this new interactive piece: Indicible Camouflage.

I'm presenting it next week at a jazz event, and I realised that in NI Mate only the active user gets an alpha mask.

Is there a way to capture all the alphas, no matter how many people stand in front of the device?

Thank you all for your great knowledge!

David

-

Glad I've helped you, David. Be sure to take some pictures and I will put them on my blog.

:-)

-

Thanks @Skulpture, I will do so!!

:-)

-

Hello Skulpture, I tried your patch, but instead of the Syphon actors I used the Kinect actor and the Kinect. My goal is to project video onto a dancer. Do you think it's possible with the Kinect? Where can I find the QC Syphon actors? I'm sending you a picture of my patch; just have a look at the right side.

Thank you for all your work.

Best wishes,

Bruno

-

It looks like you will need a Threshold actor: this will boost the white and make the black/grey more black (if that makes sense?). Put it directly after the output from the Kinect actor.

-
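What a threshold stage does to a greyscale Kinect image can be sketched in a few lines of Python. This assumes the image arrives as rows of 8-bit values with the person lighter than the grey background; the `level` name and its default of 128 are hypothetical and are not the Threshold actor's actual parameters.

```python
# Threshold sketch (assumption: greyscale image as a list of rows of 0-255 ints,
# tracked person lighter than the background; `level` is a hypothetical name).

def threshold(gray, level=128):
    """Push pixels at or above `level` to white (255) and everything else to black (0)."""
    return [[255 if v >= level else 0 for v in row] for row in gray]

kinect_gray = [
    [60, 70, 65, 58],
    [62, 190, 200, 66],
    [59, 185, 210, 61],
]
mask = threshold(kinect_gray)
for row in mask:
    print(row)
```

The grey background collapses to pure black and the body becomes pure white, which is the clean black/white input an alpha mask expects.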

Hello everybody.

I have some trouble using the Kinect with my new MacBook Pro. I have an NI Mate license, but it crashes at launch, just like Synapse. I plugged the Kinect into a USB 3 port on my Mac and I think that's the problem, but I don't have any USB 2 ports. Do you know a solution for that?

Thanks a lot

-

I use a Kinect on a new MacBook with only USB 3 ports and have no problems.

-

Me too, no problems.

-

It won't be the USB ports. It will be software/driver related.

-

OK, thanks for your answers.

-

@Skulpture

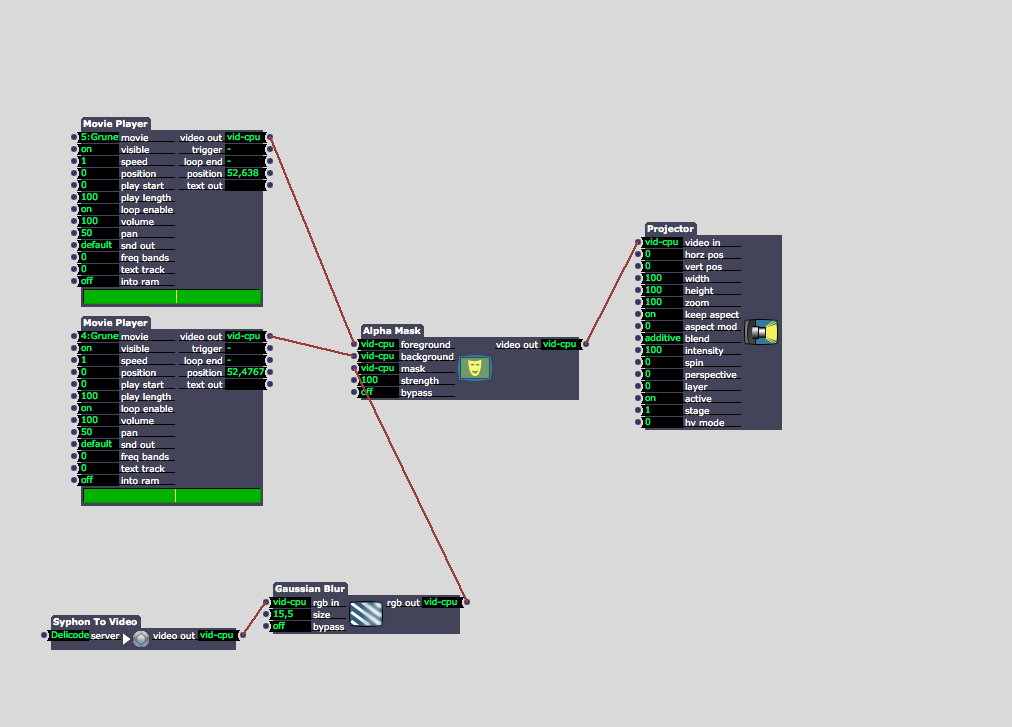

Hi, regarding your screenshot, I wanted to know whether the video actors are GPU-based and, if so, whether that's because of the Core Video plugin. I have a similar arrangement with my Kinect, but because it's all vid-cpu it's obviously very laggy.

It's going to be a big projection for a video-installation project, so it's crucial that the resolution is as high as possible.

I would probably be able to borrow a new Mac Pro for the exhibition, but it seems weird to me that my computer only manages 6-10 fps. The Isadora project probably won't go beyond these two video layers and the alpha mask, so it's fairly simple. It's just the performance that bothers me.

And second question: is this gone with izzy 2? My uni owns the v1 license, and it would probably be worth upgrading for that $125.

Thanks for helping!!!

Leo

I have an early-2011 MBP, i7 2.2 GHz, with an ATI Radeon 6750M (1 GB) and 16 GB RAM.

-

Hi,

Yes, that screenshot of mine from March 2013 is all CPU-based; I don't think I even had access to much GPU stuff back then, or if I did, I was under strict orders from the boss not to tell anyone. :-)

You do want to keep it as GPU-based as possible. But it can get complicated, because there is no GPU Alpha Mask actor just yet, so you'd need to convert back to CPU for that step. The movies themselves, though, could use the new version 2.0 players, which should help frame rates with the right H.264 codec.

I get a lot of questions, emails and PMs about this topic, so it may be time to revisit it and give it a refresh.

I am not sure what you mean by _"and second question: is this gone with izzy 2"_. Is what gone? Sorry, not following.

Hope this helps for now.