Body Mapping with Isadora

-

@the-symbiosis said:

I've been trying to use Isadora for a body mapping project, where the movements of a choreographer will be captured via a Kinect and animated content will be projected onto her (moving) body in real time. For this, the idea is to apply an alpha mask to the live feed from the Kinect, add some effects to the mask, and project it onto the moving body via MadMapper (or Isadora itself).

Keep your eyes peeled for Isadora 3 my friend ;)
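The alpha-mask idea quoted above can be sketched with the Kinect's depth image: keep only the pixels whose depth falls inside a band around the performer and make everything else transparent. This is a minimal plain-Python sketch, not how Isadora does it internally. It assumes a depth frame given as rows of millimetre values where 0 means "no reading"; the function name `depth_to_alpha_mask` and the near/far limits are hypothetical.

```python
from typing import List

DepthFrame = List[List[int]]  # rows of depth values in millimetres (assumed layout)


def depth_to_alpha_mask(depth_mm: DepthFrame, near_mm: int, far_mm: int) -> List[List[int]]:
    """Return an 8-bit alpha mask: 255 (opaque) inside the depth band, 0 elsewhere.

    Pixels with depth 0 are treated as 'no reading' and masked out.
    """
    return [
        [255 if d != 0 and near_mm <= d <= far_mm else 0 for d in row]
        for row in depth_mm
    ]


if __name__ == "__main__":
    # Hypothetical 2x3 frame: the performer stands roughly 1.5-2.5 m from the sensor.
    frame = [[0, 1500, 2500],
             [1800, 4000, 2100]]
    for row in depth_to_alpha_mask(frame, near_mm=1000, far_mm=2500):
        print(row)
```

In practice the mask would then be blurred or otherwise processed before being used as the alpha channel of the projected content; that step is left out here.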

-

@mark_m said:

How funny, I have just spent the afternoon working with Desiree, the woman doing the handstand in that Klaus Obermaier piece. I saw it live and it was just magical, and I think that it would be very difficult to reproduce the same quality with the Kinect. I can tell you from snooping about backstage, and a brief conversation with Obermaier at the time,

Stop being so cool! You're making the rest of us look bad!

Seriously though, that's amazing. I'm incredibly envious.

-

@woland

I can't help it: I'm just naturally cool

I see that the guys who did the body mapping for Apparition are based in Berlin... maybe you can make friends with Dr. Marcus Doering...

http://www.exile.at/apparition...

Here's a little more info about the system:

https://pmd-art.de/en/about/

-

Hey guys,

Wow, thanks for all the answers.

@maximortal, cheers for the in-depth step procedure, I'll surely try this one out!

As for the limitations of the Kinect for tracking (and the lag that comes with it), we're well aware of them... we do have a Vicon system available at our research centre, but we can only get skeleton information from it (unless someone knows a tweak to get some extra info out of it? ;)

The idea for this installation is to have one performer captured in the Vicon room and send the captured coordinates via OSC to the stage room, where another performer will mimic the choreography while being captured via Kinect. Now... if anyone knows whether I can do this directly with the Vicon system, that would be another story!! :)
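Sending the Vicon data to the stage room over OSC could look roughly like the following minimal sketch. It encodes an OSC 1.0 message by hand (null-padded address and type-tag strings, followed by big-endian float32 arguments) and sends it over UDP with Python's standard library. The `/vicon/<joint>` address scheme, the host and the port are hypothetical placeholders; the receiving side (for example an OSC listener in Isadora) would need to be configured to match.

```python
import socket
import struct


def osc_string(s: str) -> bytes:
    """Encode an OSC-string: ASCII bytes, at least one null, padded to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all float32 ('f' type tags)."""
    type_tags = "," + "f" * len(values)
    args = b"".join(struct.pack(">f", v) for v in values)
    return osc_string(address) + osc_string(type_tags) + args


def send_joint(sock: socket.socket, host: str, port: int,
               joint: str, x: float, y: float, z: float) -> None:
    """Send one joint position as /vicon/<joint> x y z (hypothetical address scheme)."""
    sock.sendto(osc_message(f"/vicon/{joint}", x, y, z), (host, port))


if __name__ == "__main__":
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        # 127.0.0.1 and 1234 are placeholders: use the stage-room machine's
        # IP address and whatever port its OSC receiver listens on.
        send_joint(sock, "127.0.0.1", 1234, "head", 0.12, 1.65, 0.40)
```

Each joint becomes its own small UDP packet here; grouping several joints into one OSC bundle is another option, omitted for brevity.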

-

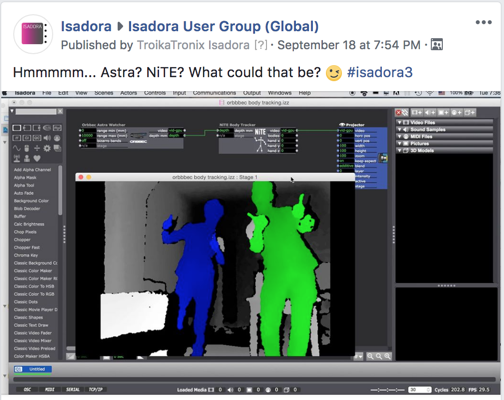

Hmm, regarding Isadora 3, I can't reach the link... :/ Can you post the image (I think it's one) here?

-

@mark_m truly funny, as Desiree was a dancer in my Aquarium piece and in other pieces which I supported technically. I haven't seen her in years. Say hello from Peter/Posthof/Vienna! I really mean it.

-

@mark_m said:

very difficult to reproduce the same quality with the Kinect.

I agree. I was there at the premiere of Apparition at Ars Electronica in 2004, and I know Zach Lieberman (of openFrameworks fame), who implemented the system. So I know quite a bit about what went on technically to make this piece happen.

The biggest problem is that the Kinect's range is so limited: it simply can't get a good image of an entire stage. You really need to switch to an infrared-based system and an infrared camera, which is what they used in Apparition (and what Troika Ranch used in 16 [R]evolutions, and Chunky Move in Glow). Then you have the potential to see a much larger area, albeit without skeleton tracking.
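Without skeleton tracking, an infrared setup typically comes down to thresholding the camera's brightness image and treating the selected pixels as the performer. The following is a minimal plain-Python sketch of that idea (not Isadora's own tracker), assuming an 8-bit grayscale frame given as rows of ints; `track_blob` and its parameters are hypothetical. Setting `dark_on_bright=True` covers a backlit setup where the performer appears as a dark silhouette against an IR-lit surface.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # rows of 8-bit IR brightness values (assumed layout)
Blob = Tuple[Tuple[float, float], Tuple[int, int, int, int]]


def track_blob(ir: Frame, threshold: int, dark_on_bright: bool = False) -> Optional[Blob]:
    """Return ((cx, cy), (x_min, y_min, x_max, y_max)) for the thresholded pixels.

    Bright pixels (> threshold) are selected by default; with dark_on_bright=True,
    dark pixels (< threshold) are selected instead. Returns None if nothing passes.
    """
    xs: List[int] = []
    ys: List[int] = []
    for y, row in enumerate(ir):
        for x, value in enumerate(row):
            selected = value < threshold if dark_on_bright else value > threshold
            if selected:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    centroid = (sum(xs) / len(xs), sum(ys) / len(ys))
    return centroid, (min(xs), min(ys), max(xs), max(ys))


if __name__ == "__main__":
    frame = [[10, 10, 200],
             [10, 220, 210]]
    print(track_blob(frame, threshold=100))
```

This lumps every selected pixel into a single blob; separating several performers would need connected-component labelling on top of the threshold.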

Another issue is the combined delay of the camera, the processing system, and the projector. The camera is always the worst, but in Apparition they struggled with the fact that no projector they could get their hands on at that time had less than a two-frame (66 ms) delay. This was critical for sections like the one you pictured above (vertical vs. horizontal stripes), because fast movements would immediately show the wrong orientation of stripe (i.e., the horizontal stripes would appear on the background instead of on the dancer). My friend Frieder Weiss, who did all of the technology work on Glow, wrote his own camera drivers to get the delay down to the absolute minimum for that piece, with much less delay than any other system I've seen. (No, they're not publicly available.)

(As a total side note: because the two performers were from a group called DV8, which is world-renowned for its fast, extremely physical choreography, I was shocked that the movement in Apparition was relatively slow throughout. I felt fairly certain then (and still do) that this was not a choreographic choice but simply a response to the limitations of the delay.)
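The stripe problem is essentially arithmetic: the projected image trails the body by roughly the performer's speed times the total latency of the chain. A small sketch of that calculation follows. The only figure taken from the post is the two-frame projector delay, read here as frames at 30 fps (about 66.7 ms); the camera and processing delays and the 3 m/s limb speed are hypothetical values for illustration.

```python
def frames_to_ms(frames: float, fps: float) -> float:
    """Convert a delay expressed in frames to milliseconds."""
    return frames * 1000.0 / fps


def projection_lag_m(speed_m_per_s: float, latency_ms: float) -> float:
    """How far (in metres) the body has moved by the time the projection catches up."""
    return speed_m_per_s * latency_ms / 1000.0


if __name__ == "__main__":
    fps = 30.0
    stages_ms = {
        "camera": frames_to_ms(2, fps),      # hypothetical
        "processing": frames_to_ms(1, fps),  # hypothetical
        "projector": frames_to_ms(2, fps),   # the two-frame delay from the post
    }
    total_ms = sum(stages_ms.values())
    print(f"total latency: {total_ms:.1f} ms")
    print(f"lag at 3 m/s: {projection_lag_m(3.0, total_ms):.2f} m")
```

Even the projector's share alone (about 66.7 ms) puts a limb moving at 3 m/s about 20 cm ahead of its projected image, easily enough for a stripe to land on the background.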

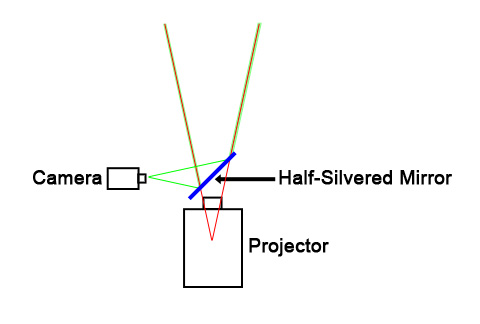

In addition, they had to pay something like 5000 EUR for a half-silvered mirror that allowed the focal point of the camera lens and the focal point of the projector lens to match as perfectly as possible, so that you wouldn't get shadows on the screen because of parallax problems.
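Why the mirror matters can be seen with an idealized pinhole model (a simplification for illustration, not the Apparition team's own analysis). Put the camera and projector side by side at the same distance from the stage, a lateral distance b apart, and calibrate the camera-to-projector mapping on the screen plane at distance d0. A body point at distance d then has its projection displaced sideways by b·(1 − d/d0). The error vanishes on the calibration plane and grows as the performer moves toward the devices; a half-silvered mirror that makes the two lenses optically coincide sets b to zero, so the error disappears at every depth. The function name and the example distances are hypothetical.

```python
def parallax_error_m(baseline_m: float, body_depth_m: float, calib_depth_m: float) -> float:
    """Lateral misregistration on the body, in metres (idealized pinhole model).

    baseline_m: sideways offset between the camera and projector lenses
    body_depth_m: distance from the devices to the performer
    calib_depth_m: distance of the plane on which the mapping was calibrated
    """
    return baseline_m * (1.0 - body_depth_m / calib_depth_m)


if __name__ == "__main__":
    # Hypothetical numbers: lenses 30 cm apart, screen 8 m away, dancer at 4 m.
    print(parallax_error_m(0.30, 4.0, 8.0))  # side-by-side devices
    print(parallax_error_m(0.0, 4.0, 8.0))   # coaxial via a half-silvered mirror
```

Lens distortion and differing fields of view are ignored here; they add their own misregistration on top of the geometric offset.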

Finally, this amusing fact: to create the infrared field that could evenly span the entire stage, they focused numerous infrared heating lights -- like you've seen in restaurants -- on the rear projection surface.

Apparently the temperature backstage was blazingly hot because of this; there was at least some fear they would melt the rear-projection surface as a result. ;-)

Anyway, watch my tutorial on infrared tracking for techniques and tips on making use of that approach to tracking. It has its own issues and there's a learning curve to make it work, but I can verify that once you get the system down, it's very reliable.

Best Wishes,

Mark

-

@mark I never thought of using a half-silvered mirror; it's brilliant. But from what I understand, the focal distance of the camera and the projector must be the same to avoid problems with polar coordinates, or am I wrong?

-

Hi everyone, and many thanks for all your input, truly appreciated.

I should have mentioned this is a research project, more a case study regarding the bidirectional communication between systems than a performance itself.

The goal is for us to study and understand the possibilities (and “less possibilities”) of using our systems for expressive movement analysis in the performing arts; the idea of body mapping was just a “small” extra we added to boost our enthusiasm for the experience.

I agree with what @mark_m said: if we limit our ambition and keep the project simple, we’ll be able to get something reasonably convincing.

Also going to watch the tutorial on infrared tracking, thanks for that @mark.

This topic is getting quite interesting, I have to say. Please feel free to keep the discussion going, as new ideas may arise from it.

Regards to you all from sunny Portugal