Multi channel audio beyond straight routing with SoundPlayer or MoviePlayer

-

Hi,

I know there is multichannel audio output available using parameters in the MoviePlayer and SoundPlayer on the Mac, and I have these working beautifully with the Focusrite pre 8 I am using. But is there any way to specify audio channels for sound manipulated/generated within Isadora on the Mac platform? I have looked at numerous audio device output actors but cannot find a parameter for multichannel selection.

Best wishes

Russell

-

Edit: See Mark's answer below.

-

Phew!!

Thanks for alerting me to the ‘aggregate device’ option. I will try a method using these options.

Best wishes

Russell

-

Edit: See Mark's answer below.

-

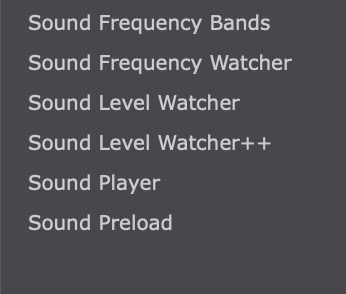

Are most of the sound actors you have shown plug-ins? I can't find them on 3.0.2 on my Mac.

Thanks

Andy

-

@andy-demaine-iic said:

Are most of the sound actors you have shown plug-ins?

All of those are native audio actors on Mac. Not sure how they could not show up for you. What OS?

-

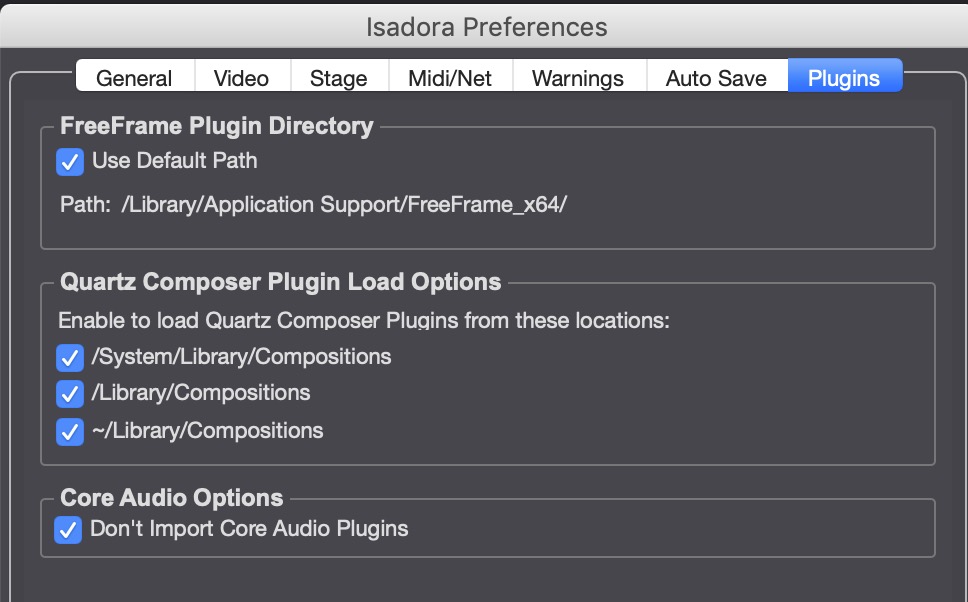

Perhaps you checked the Core Audio option in the preferences.

In that case you will see only these actors:

-

@woland said:

Success(?) Failure(?)

Hi Lucas,

Firstly, thank you for going through all of those options so methodically. The Force is strong with you.

I have also gone through many procedures hinted and highlighted by you in your informative post. But I have to say that I was unsuccessful in outputting audio to individual channels through the Mac AU audio modules.

Where the option looked most promising, in the system settings for audio setup (create Aggregate Device), I could only append one device to another, increasing the number of channels available, but it did not allow any options for mapping those channels. I could create custom names for the channels and that is about all.

These audio plugins are from Apple, so they are neither the responsibility of Isadora nor do they meet Isadora's policy of moving towards cross-platform equity in terms of what is featured in the application on Windows and Mac. So I do not expect any resolution anytime soon for this particular set of modules available through the Mac version of Isadora and Apple's Core Audio features.

One thing that did strike me as odd (but probably expected behaviour) was that 'out channels' is a variable input parameter in the module AUMatrixMixer. However, indicating additional 'out channels' did not append additional outputs to the right side of the module. In addition, without a parameter to select device output channels in the 'AudioDeviceOutput' module, the task of routing audio that is manipulated or modulated in Isadora to different sound outputs of the selected device appears unfeasible, even with an Aggregate Device setup.

The multichannel parameters for assets already recorded in a movie or sound file all look to be working well. But I could not find a way to access multi channel output using any of the AU audio manipulation modules. Of course I could be wrong about this.

best wishes

Russell

-

I can tell you that audio is on our list of things to expand in the very near future so hopefully we'll have some joy for you soon. Until then it seems the best way to go about this is to make movie files with multiple audio channels (if I understand correctly).

-

@bonemap said:

But is there any way to specify audio channels for sound manipulated/generated within Isadora on the Mac platform?

Dear All,

I congratulate Lucas on his attempt to answer this question. But there are some extraneous steps in his answer that might obscure the most important points.

That said, it is very possible to do output routing on MacOS, but it requires the use of a Core Audio module called AUMatrixMixer. I am going to do my best to make this as simple as possible, given that the AUMatrixMixer is necessarily complex when you have a lot of inputs and outputs.

@Andy-Demaine-IIC These features are MacOS only, because they build on the Core Audio system that is built in to MacOS. There is no equivalent built in system for VST plugins on Windows; it needs to be coded by hand and is thus a far more massive job to implement. However, adding VST support is on the roadmap for late this year/early next year.

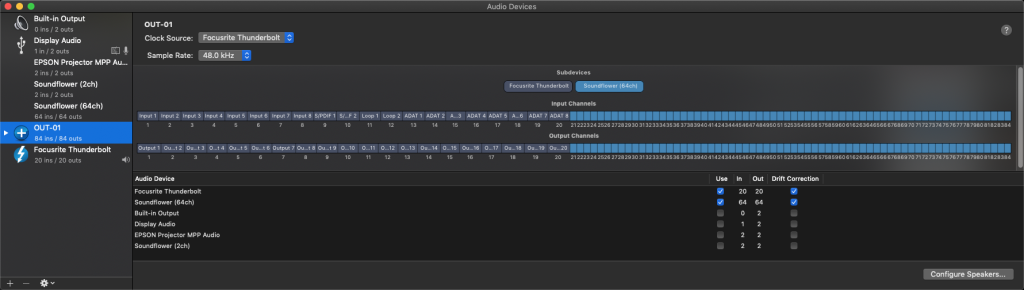

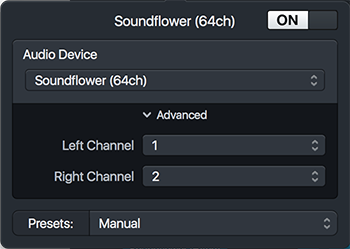

BEFORE WE BEGIN: HELPFUL TOOLS

Before I get in to this: if you don't have an external audio device and would like to try this setup, you can use Soundflower. After you install Soundflower, you'll have two new "audio devices": Soundflower (2ch) and Soundflower (64ch). Soundflower is a system that allows virtual sound input/output between apps, much like what Syphon or Spout does for video. I put "audio devices" in quotes because it's not really a hardware device, but as far as MacOS is concerned, it appears to be hardware.

Second: if you're using Soundflower and you don't have speakers hooked up, how can you see what you're doing? There are ways to do this in Isadora, but maybe easier is to download Audio Hijack Pro. (You shouldn't need the licensed version to use it for this example; the demo should work fine.)

In Audio Hijack Pro, you can easily create a setup like this to give you some "vu meters" to see what's coming out of Soundflower.

Just add the "Input Device" object and click on it to select Soundflower (64ch) as an input and to select the input channels: 1 + 2 for the first one, 3 + 4 for the second one, etc.

To save you some time, I have exported this setup from Audio Hijack Pro v3.3.4 so you can simply import it and use it.

Soundflower Monitor.ahsession.zip

IMPORTANT: you have to hit record to make the meters move!

I used these tools to prepare this example because I'm on the road and do not have access to a multichannel audio hardware device.

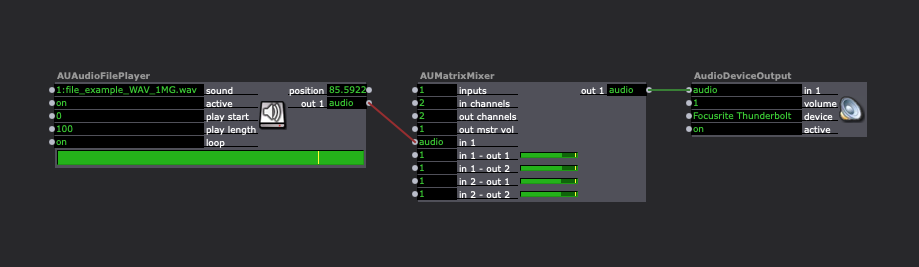

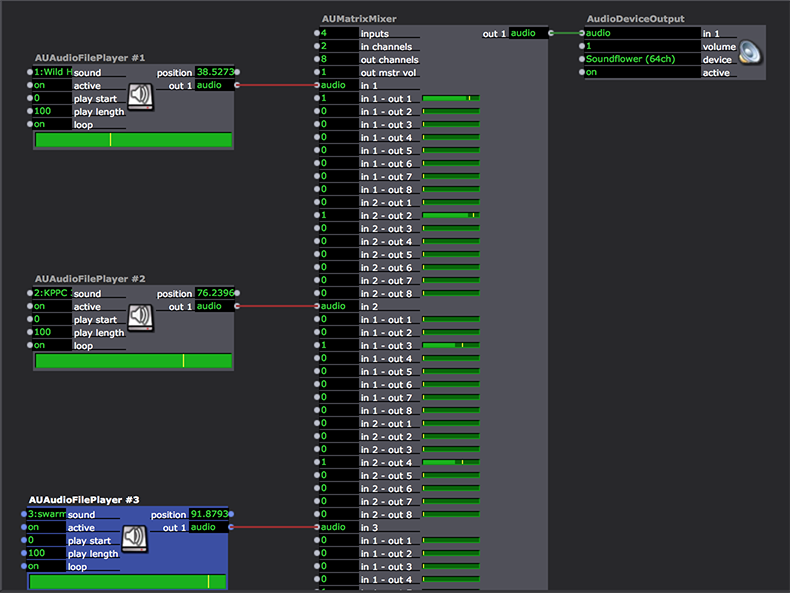

USING THE AU MATRIX MIXER TO ROUTE SOUND

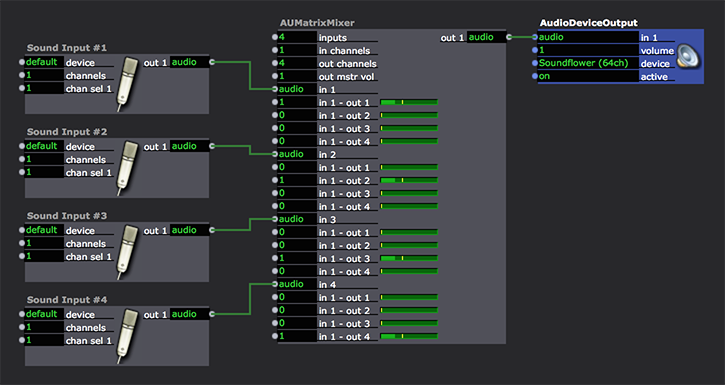

First, it is important to understand the 'audio' type that passes audio from module to module. This is a stream of audio data, but unlike video data, it can contain multiple channels of audio. Here's a simple example where we mix four monophonic audio signals coming from the computer's internal microphone and route each of them to the individual outputs of the connected audio device.

Note that I've renamed each of the Sound Input actors to "Sound Input #1", "Sound Input #2", etc. so that we can identify them.

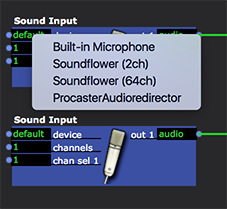

You can see in these Sound Input actors that we're taking audio input from the default device. Which device is the default is determined by Apple's Audio MIDI Setup program. You can change which device is the source by clicking on the 'device' input -- a popup menu with all available devices will appear.

For this example the default input was my laptop's microphone. The 'channels' input is set to 1, so the output will be a single channel. The 'chan sel 1' input is set to 1, so the Sound Input actor will grab the first (left) channel of the stereo signal coming from the microphone.

If you were taking sound in from an eight channel audio interface, you could (for example) set the 'channels' input to 4 and the four 'chan sel' inputs to 2,3,5, and 7 to grab the second, third, fifth and seventh input channels.
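To make the 'chan sel' idea concrete, here is a minimal sketch in Python. It is only a model of the selection described above, not Isadora or Core Audio code; the function name `select_channels` and the sample values are made up for illustration.

```python
def select_channels(frame, chan_sel):
    """Pick channels (1-based, like the 'chan sel' inputs) from one sample frame.

    frame: one sample per channel of the hypothetical input device.
    chan_sel: the channel numbers to keep, in output order.
    """
    return [frame[c - 1] for c in chan_sel]


# One sample frame from an imaginary eight-channel interface (made-up values).
frame = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]

# 'channels' = 4 with 'chan sel' inputs set to 2, 3, 5 and 7.
picked = select_channels(frame, [2, 3, 5, 7])
print(picked)  # [0.2, 0.3, 0.5, 0.7]
```

The resulting four-channel frame carries device channels 2, 3, 5 and 7 as its channels 1 to 4.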

As with the Sound Input actors, you can choose the audio hardware device by clicking in the 'device' input of the AudioDeviceOutput actor. For this example, I used Soundflower (64ch) to simulate a multichannel audio output device. If you have a hardware device connected to your computer, you should click the 'device' input on the AudioDeviceOutput actor and select that device.

Now, we add an AUMatrixMixer and enter the following settings:

'inputs' = 4: we will accept four audio streams

'in channels' = 1: all four audio streams will be a single channel, i.e., monophonic

'out channels' = 4: the resulting audio stream will have four channels, i.e., it will be quadraphonic.

When you enter these settings, a whole bunch of inputs to control the volume will appear. Each of these allows you to determine how loud the input channels will be for each possible output. So consider the first four of these, which deal only with the first audio stream:

'in 1 - out 1' - sets the volume of input audio stream 1, channel 1 for output audio stream, channel 1

'in 1 - out 2' - sets the volume of input audio stream 1, channel 1 for output audio stream, channel 2

'in 1 - out 3' - sets the volume of input audio stream 1, channel 1 for output audio stream, channel 3

'in 1 - out 4' - sets the volume of input audio stream 1, channel 1 for output audio stream, channel 4

Each audio stream will have four inputs like this, because there is one input channel (the monophonic audio signal) and four outputs (the four channels on the audio output device).

If you look at the picture above, you'll see that only four of these inputs are set to 1.0 (which means the signal is simply passed through without changing its volume):

'in 1 - out 1' = 1.0

'in 2 - out 2' = 1.0

'in 3 - out 3' = 1.0

'in 4 - out 4' = 1.0

All other inputs like this are set to 0.0, meaning they are silent.

By using this setting, we've now been able to route the monophonic signal from Sound Input #1 to output 1 on the hardware device, the signal from the Sound Input #2 to output 2, and so on.
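If it helps to think of the volume inputs as a grid of gains, the following Python sketch models the arithmetic for a single sample. This is an illustration of the idea only, not how Isadora or the AUMatrixMixer is implemented; `mix` and `one_to_one` are hypothetical names, and the sample values are invented.

```python
def mix(inputs, gains):
    """Mix mono input samples into output channels.

    inputs: one sample per mono input stream.
    gains[i][o]: volume applied to input stream i+1 on its way to output o+1.
    Each output is the sum of all inputs scaled by their gain for that output.
    """
    n_out = len(gains[0])
    return [
        sum(inputs[i] * gains[i][o] for i in range(len(inputs)))
        for o in range(n_out)
    ]


def one_to_one(n):
    """Gain grid with 1.0 where input number equals output number, 0.0 elsewhere."""
    return [[1.0 if i == o else 0.0 for o in range(n)] for i in range(n)]


# One sample from each of the four Sound Input actors (made-up values).
samples = [0.5, -0.25, 0.75, 0.1]
outputs = mix(samples, one_to_one(4))
print(outputs)  # [0.5, -0.25, 0.75, 0.1]
```

With only the four "diagonal" gains at 1.0, every input passes unchanged to the output with the same number. Any other value between 0.0 and 1.0 would send a quieter copy of that input to that output.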

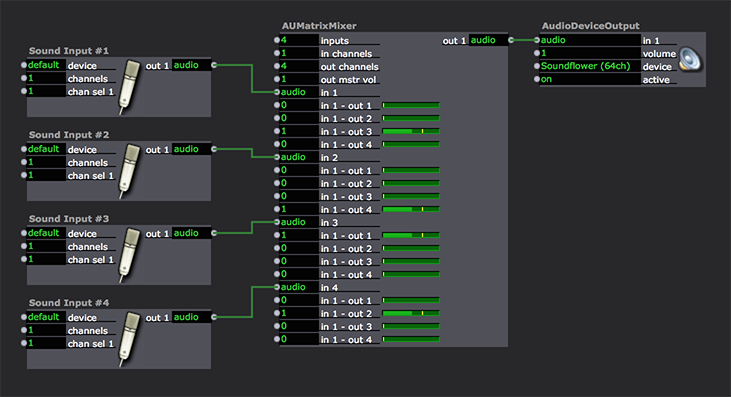

If we wanted to route

Input #1 -> output 3

Input #2 -> output 4

Input #3 -> output 1

Input #4 -> output 2

then you'd have to set the volume inputs as shown below:

Hopefully that starts to make sense to you: the various volume inputs offer you the opportunity to route any input to any output, and to do so interactively if you wish.
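As a rough sketch of that re-routing, here is how the same input-to-output table could be turned into a grid of 1.0/0.0 volume settings in Python. The helper name `gains_from_routes` is an assumption made for this illustration and has nothing to do with the actor's internals.

```python
def gains_from_routes(routes, n_in, n_out):
    """Build a gain grid from a {input number: output number} table (1-based).

    The entry for each listed route is 1.0; everything else stays at 0.0.
    """
    gains = [[0.0] * n_out for _ in range(n_in)]
    for src, dst in routes.items():
        gains[src - 1][dst - 1] = 1.0
    return gains


# Input #1 -> output 3, Input #2 -> output 4, Input #3 -> output 1, Input #4 -> output 2
routes = {1: 3, 2: 4, 3: 1, 4: 2}
gains = gains_from_routes(routes, n_in=4, n_out=4)

for number, row in enumerate(gains, start=1):
    print(f"Input #{number}: {row}")
# Input #1: [0.0, 0.0, 1.0, 0.0]
# Input #2: [0.0, 0.0, 0.0, 1.0]
# Input #3: [1.0, 0.0, 0.0, 0.0]
# Input #4: [0.0, 1.0, 0.0, 0.0]
```

Each row corresponds to one Sound Input actor, and the position of the 1.0 in the row is the hardware output it ends up on.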

Now (and this is where things get more complex) let's play four stereo audio files and route them using the AUMatrixMixer. To begin, you'll need to import four stereo audio files (uncompressed AIFF or WAVE) into Isadora.

Note that the AUMatrixMixer is so big that I can't snap a picture of the entire thing. ;-)

That said, when you add your AUMatrixMixer, you will want to enter the following settings:

'inputs' = 4: we will accept four audio streams

'in channels' = 2: all four audio streams will have two channels, i.e., stereo.

'out channels' = 8: the resulting audio stream will have eight channels, i.e., it will be octaphonic.

The AUMatrixMixer gets pretty huge at this point, because there are so many volume inputs to manage. In the end, here are the inputs that have their volume set to 1.0:

audio 1: 'in 1 - out 1' -- left channel goes to output 1

audio 1: 'in 2 - out 2' -- right channel goes to output 2

audio 2: 'in 1 - out 3' -- left channel goes to output 3

audio 2: 'in 2 - out 4' -- right channel goes to output 4

audio 3: 'in 1 - out 5' -- left channel goes to output 5

audio 3: 'in 2 - out 6' -- right channel goes to output 6

audio 4: 'in 1 - out 7' -- left channel goes to output 7

audio 4: 'in 2 - out 8' -- right channel goes to output 8

So, using this mixer setup, we can route the left and right channels of all four stereo audio files to the eight outputs. I've done the simplest possible setup here, making sort of a "one to one" assignment of the sound file channels to the outputs. But I think you see that far more complex mixing is possible using the very powerful AUMatrixMixer actor.
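The pattern in that list is regular: channel c of stream s lands on output 2 × (s − 1) + c. The short Python sketch below generates the same kind of list and counts the volume inputs, assuming (as the description suggests, but not verified against the actor) that every input channel of every stream gets one volume input per output channel. The function name `stereo_one_to_one` is invented for this example.

```python
def stereo_one_to_one(n_streams, in_channels=2):
    """List (stream, input channel, output channel) triples for a one-to-one layout.

    Stream s, channel c is assigned to output (s - 1) * in_channels + c,
    all numbered from 1.
    """
    settings = []
    for stream in range(1, n_streams + 1):
        for channel in range(1, in_channels + 1):
            output = (stream - 1) * in_channels + channel
            settings.append((stream, channel, output))
    return settings


settings = stereo_one_to_one(4)
for stream, channel, output in settings:
    print(f"audio {stream}: 'in {channel} - out {output}' = 1.0")

# Assumed total number of volume inputs: streams x in channels x out channels.
total_volume_inputs = 4 * 2 * 8
print(total_volume_inputs)  # 64
```

Only eight of those volume inputs are set to 1.0 in this layout; the rest stay at 0.0.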

To finish this example, add four AUAudioFilePlayer actors and connect them to the four 'audio' inputs of the AUMatrixMixer. Then set the 'sound' input on those actors to the sounds you imported above.

That's as far as I'm going to go for the moment due to time constraints here. But please go through this and see if you can replicate these setups and tell me how they work for you.

Best Wishes,

Mark -

-

@bonemap said:

Yes it makes sense but it is freakishly unintuitive.

Well, it's what Core Audio offers us; the AUMatrixMixer was designed to offer you every possible routing for any number of channels, and that means it's functional at the cost of being easy to use. (I'd also offer that it may feel unintuitive, but it is nevertheless very logical.) I could see creating some kind of intermediary actor that simply allowed you to route signals without the complexity. But I am not inclined to do it until we can implement VST support; putting energy into solutions that only work for one platform is not a good use of our time.

[OFF TOPIC] Situations like this always take me to a philosophical point: how far do we go with Isadora in terms of things supporting certain kinds of features? (3D support brings up similar questions for me.) I mean, there are incredibly powerful options out there when it comes to sound software. Do we reinvent the wheel in Isadora, never reaching the level of sophistication of programs that have focused on audio and been in development for years? I think the answer is to cherry pick the most important needs and address them... but coming to a determination of what those needs are is a complex process of its own.

In any case, the solution I outlined above will work. And once you've got the routing setup, maybe it's not so bad. Give it a try and report back.

Best Wishes,

Mark -

@mark said:

I think the answer is to cherry pick the most important needs and address them... but coming to a determination of what those needs are is a complex process of its own

Agreed and agreed

-

[OFF TOPIC] Situations like this always take me to a philosophical point: how far do we go with Isadora in terms of things supporting certain kinds of features?

Personally I rarely have expectations about what new features are added to Izzy. But I would like to have the insight to implement/deploy whatever existing features or functionality are lurking there and waiting in Izzy - as a potential.

Providing enough description to uncover the potential of a specific existing module is as powerful as adding new features IMHO. That is why the revelation about the AUMatrixMixer is so astonishing. Yes, it is logical for an audio technician - and anyone else, once the realisation ‘that audio, unlike video, carries multiple channels in a single stream’ and 'panning to separate individual tracks that have been forced into stereo pairs' is applied to unpack the problem. This simple statement unravels a whole chain of sequences and connections that resolve the implementation of multichannel audio. No matter how obvious that statement might appear to be!

I think, for me, I do have an expectation that what already exists in Izzy is a ‘supported’ feature and functional to the highest limit of its potential. To that end I will make noise when it appears that some parameter function is broken or appears to be not functioning as expected.

There are existing 3D modules incorporated into Izzy; therefore the expectation, from a user perspective, is that they are supported. When I first approached using the 3D modules, I had no idea or insight into the extent of their potential. The joy of discovering what they are capable of producing has been a source of inspiration and a catalyst for imagining a way of working with Izzy that has lasted many years.

I have become accustomed to the statement that ‘3D is not a priority feature’. But, I would say that the existing 3D modules have so much ‘hidden’ potential that perhaps it is not a question of adding new 3D features, it is more about ensuring the current offering is functioning to a high level potential.

best wishes

Russell