Mask with mapper on output not input.

-

I have a fairly complex mapping project to do: I have 16 oddly shaped pieces, all at strange angles, that I need to map onto. I need to map and mask video onto them so that it keeps its geometry. The video was not made for this, and the shapes have been developed and placed in a very analogue way, so there are no scans or details of their shape and offset.

What I actually need to do is pretty simple. I need to take a quad and warp it so that the video lines up visually, just to take care of the perspective, and then I want to create a mask on the output to make clean edges. I will then need to make many adjustments to try to keep the scale consistent across all the pieces. As the content is all made synchronously and matches spatially, I need to be able to adjust the scale and position of the source quad easily while the mask stays the same.
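To make the request concrete, here is a minimal sketch of what "mask on the output" means. It is not how the mapper works internally. All names (`render`, `sample_source`, `mask`) are hypothetical, and a simple scale and offset stands in for the quad warp.

```python
import math

# Sketch only: the mask lives in output (projector) space, while the
# source transform can change freely. Scale/offset stands in for a quad warp.

def sample_source(source, x, y, scale, offset):
    """Map an output pixel back into the source image (nearest neighbour)."""
    sx = math.floor((x - offset[0]) / scale)
    sy = math.floor((y - offset[1]) / scale)
    if 0 <= sy < len(source) and 0 <= sx < len(source[0]):
        return source[sy][sx]
    return 0  # outside the source: black

def render(source, mask, scale=1.0, offset=(0, 0)):
    """Output pixel = fixed output mask * transformed source."""
    return [
        [mask[y][x] * sample_source(source, x, y, scale, offset)
         for x in range(len(mask[0]))]
        for y in range(len(mask))
    ]

source = [[1, 2, 3, 4],
          [5, 6, 7, 8],
          [9, 10, 11, 12],
          [13, 14, 15, 16]]

# The mask is defined once, in output coordinates.
mask = [[0, 0, 0, 0],
        [0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 0, 0]]

a = render(source, mask)                             # untransformed source
b = render(source, mask, scale=2.0, offset=(1, 1))   # scaled and moved source
```

In both `a` and `b` only the four centre pixels are lit: the content inside the hole changes with the source transform, but the edges of the hole do not move.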

I tried for some time using the composite tool in the mapper, but this does not really work - it masks great but then if I rotate or scale the input, the output follows.

If I add another quad in the composite and try to make some quads subtractive and some additive, I get the same or stranger behaviour, i.e. the geometry is locked together.

Ideally I would like to layer slices the same way I can layer slices inside a composite mapper, being able to select subtract, add or invert for the "blend" mode. Between slices outside of the composite tool there is no such option, only transparent, opaque or additive.

I know I can achieve this in some really inefficient ways, but it is a bit of a tough project. I am playing a 7680 * 2160 HAP AVI across 7 projectors of 1280 * 800 each, onto 16 targets. Making virtual stages for all of these is going to eat too many resources.
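A quick back-of-envelope on the numbers above. The source and projector sizes are from the post; the size of each virtual stage is an assumption (full projector resolution), so treat the last figure as a rough guide only.

```python
# Pixel budget for the setup described above.
source_px = 7680 * 2160      # one frame of the movie
projector_px = 1280 * 800    # one projector
projectors = 7
targets = 16

total_output_px = projectors * projector_px

# Assumption: one virtual stage per target, each at full projector resolution.
virtual_stage_px = targets * projector_px

print(f"source frame:       {source_px:,} px")
print(f"all projectors:     {total_output_px:,} px")
print(f"16 virtual stages:  {virtual_stage_px:,} px (assumed size)")
```

Under that assumption the virtual stages alone add up to about as many pixels per frame as the source movie, on top of the final outputs.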

I hope I am missing something really simple, as it does not seem like a complex problem. Any hints would be great.

-

@fred I am really in a hurry with this one; maybe @mark can give me a hint if I am wrong. OK, so it seems the only way I can make this work is to use a virtual stage per slice. Using 16 high-res virtual stages is a pretty big performance hit just to mask some content. I hope there is a better way that I missed.

-

@fred said:

I have a kind of complex mapping project to do, I have 16 oddly shaped pieces that I need to map on that are all on strange angles. I need to map and mask video onto them so that it keeps its geometry. The video is not made for this and the shapes have been developed and placed in a very analogue way, so there are no scans or details of their shape and offset.... Using 16 high res virtual stages is a pretty big performance hit just to mask some content.

If you've got 16 unique objects at unique angles to map onto, how would you avoid sending content to them separately?

@fred said:

I need to get a quad and warp it so that the video lines up visually, just to take care of the perspective, and then I want to create a mask on the output to make clean edges. I will need to then make many adjustments to try to keep the scale across all the pieces and as the content is also all made synchronously and spatially matches, I need to be able to adjust the scale and position of the source quad easily but the mask can stay the same.

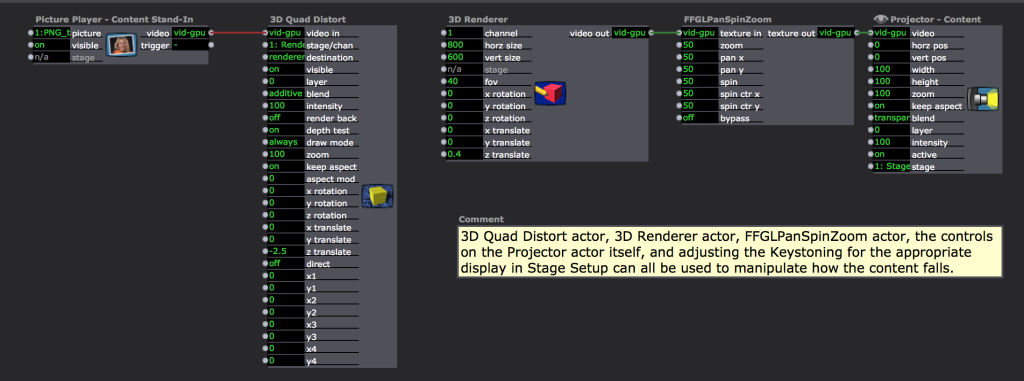

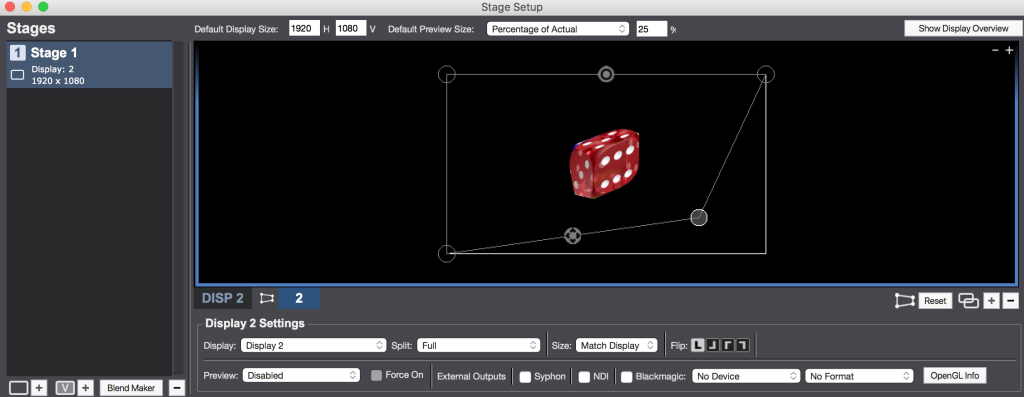

Have you tried the 3D Quad Distort actor, the 3D Renderer actor, the FFGLPanSpinZoom actor, the Projector actor, and keystoning the display in Stage Setup to get your quad and warp?

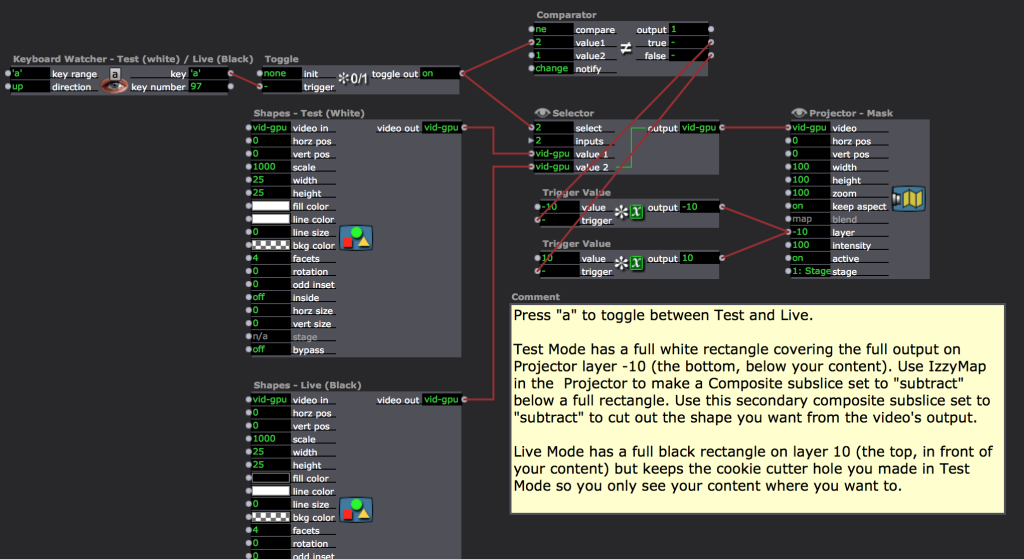

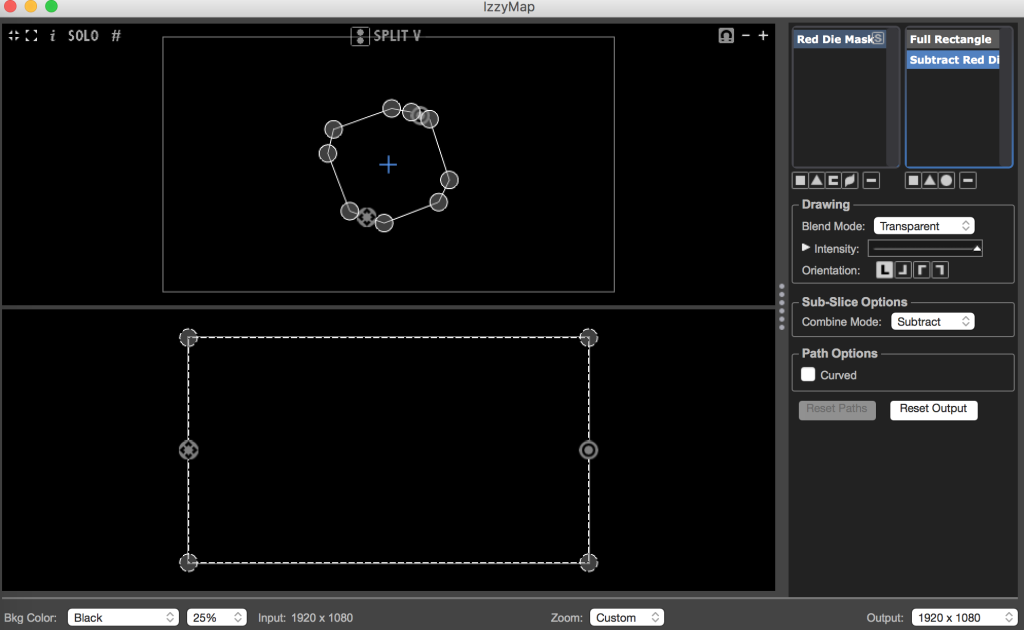

I like to make a "Cookie Cutter" IzzyMap that has a little hole for the content I want to show on a mapped Projector set to Transparent, Layer 10.

Test Mode vs Live Mode Gif: http://g.recordit.co/6lnusPGsIN.gif

You can also tweak the mask in test mode until you have the holes you want, turn off your other content, and then capture your stage to a picture to get a concrete .jpg of your mask. You can then use Photoshop to turn the holes into alpha and edit the mask into your video content.

File to play with --> Fred.zip

Best wishes,

Woland

-

@woland thanks, this method is cool, but for me it is also quite hard to manage. I ended up using a virtual stage for each slice and then sending each virtual stage to another mapper to do the masking. I prefer this mostly because the mapper interface is much nicer to use for quad warping: I don't have to build a secondary interface for the quad warping as I would with your suggestion (this gets rough with 16 slices and I don't have much time). Overall I think the solution I am using, although heavy (I suspect that without the 3D and FFGL it uses fewer resources than your example), keeps a pretty usable interface, and one that is appropriate for mapping, especially with large numbers of pieces.

I think an output mask on the mapper is a pretty valid feature, and something that would make this and a few other scenarios I have faced a fair bit easier. Alternatively, having the same blending options that exist in the composite mapper (additive, subtractive and invert) for all slices would be great and would allow for a lot of cool and fast variations.

Yesterday I made a solution similar to yours, creating a kind of fake alpha mask with shapes. It works quite well but it is cumbersome.

To answer this: "If you've got 16 unique objects at unique angles to map onto, how would you avoid sending content to them separately?"

I only have 7 projectors, so if masking outputs on the mapper were possible I would not need any virtual stages (now I have 16) and I would only need 7 projectors (I now have 32) - my source material is one huge movie file.

As for the screen capture and re-edit, I need a completely flexible workflow. It's mapping, and it will need to be checked and adjusted. The movie takes a long, long time to render, and I cannot wait 6 hours for masks that will need to be adjusted again as the projectors and hanging slices move a little.

While I was doing this I tried a few other things and also wanted to ask about the logic. If I have an image with alpha coming into the mapper and I make a rectangular slice in the mapper that contains only that alpha, what happens to it? At the moment it is pretty much ignored in compositing. Looking at the layer hierarchy logic, I should be able to sample a piece of alpha inside the mapper and use it as a "mask" (depending on the layer hierarchy and settings), but this does not seem to be what is happening now. So what is the logic when I sample a piece of alpha material inside the mapper and layer it with other slices?

Fred