capturing info from multiple fast OSC messages

-

I'm having some trouble getting this to work and hoping someone can help.

I have a situation where I receive multiple OSC messages to the same "channel" very rapidly, basically in parallel. But each is in reference to a separate object, sort of a status of that object. The messages might look something like this:

/oscmsg/test 1 1

/oscmsg/test 2 1

/oscmsg/test 3 2

/oscmsg/test 4 1

These arrive at virtually the same time (within a millisecond or so). The first parameter is the index and the second is the status.

I'd like to "pivot" this into a set of global values.

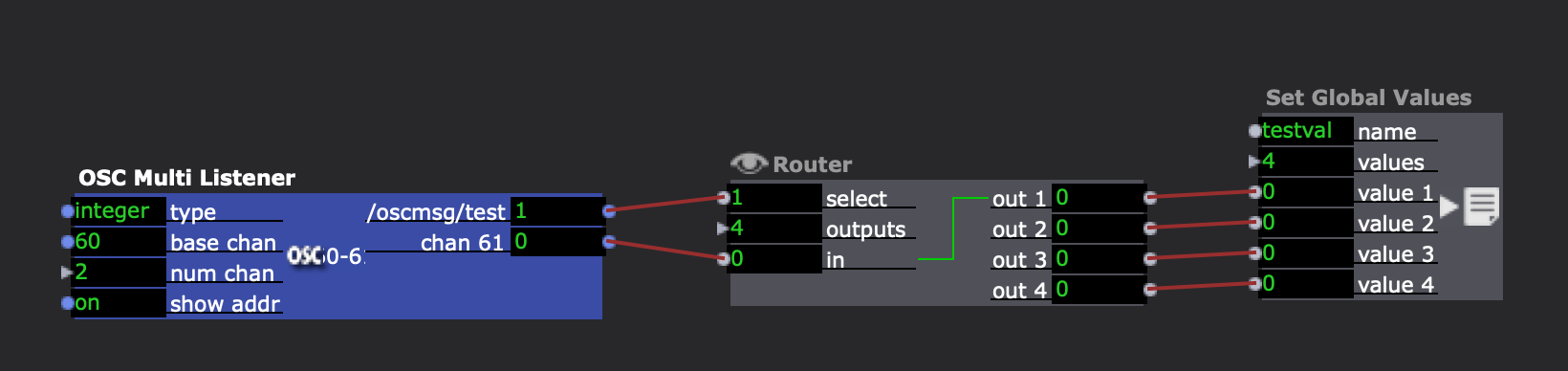

Here's what I put together, which seems like it should work:

The issue seems to be a race condition: while the values from one message are being set, several other messages may already have replaced them, so some messages are skipped.

In other words, I always get the last value successfully, but usually at least one of the others seems to be skipped and fails to get set.

All of the messages come within about a millisecond, so it seems we'd need to buffer/serialize them somehow, in a way I haven't figured out yet. I know Isadora can handle really fast data, so I'm hoping there is some place where it can reliably receive each of the OSC messages and route them to the appropriate places.
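To make the symptom concrete, here is a minimal Python sketch. It is only a model of the behaviour described above, not of Isadora's internals: it assumes (hypothetically) that a receiver keeps a single "latest value" slot per address and reads it once per processing pass, and contrasts that with draining a queue. The function names `pivot_latest_only` and `pivot_queued` are made up for illustration.

```python
from collections import deque

# Four messages to the same address, all arriving between two processing passes.
# Each tuple is (index, status), matching the example messages above.
burst = [(1, 1), (2, 1), (3, 2), (4, 1)]


def pivot_latest_only(messages):
    """Model a single 'latest value' slot that is read once per pass."""
    slot = None
    for msg in messages:      # every arrival overwrites the slot
        slot = msg
    values = {}
    if slot is not None:      # the pass only ever sees the survivor
        index, status = slot
        values[index] = status
    return values


def pivot_queued(messages):
    """Model a FIFO buffer that is fully drained on each pass."""
    queue = deque(messages)   # arrivals are appended, nothing is overwritten
    values = {}
    while queue:
        index, status = queue.popleft()
        values[index] = status
    return values


print(pivot_latest_only(burst))  # {4: 1}
print(pivot_queued(burst))       # {1: 1, 2: 1, 3: 2, 4: 1}
```

The first result matches what I'm seeing (only the last index gets set); the second is the "pivoted" set of values I'm after.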

Thanks for the help,

Bernie

-

I've just been doing some tests on this myself (for the same thing as Bernie) and, while last time I tried this I had similar results to Bernie, I am now finding that I only ever see the last result reflected in the output.

This may be something to do with what else my machine is doing, but with everything I've tried I am only seeing one result from a number of messages. I have tried:

- A counter incrementing on a value which changes on each value change. But when three messages are received, the counter only increments once.

- A Text Accumulator which takes the string output of the OSC argument. Again, this only ever takes the final (of three) value of that argument each time.

I've had a look in Isadora's logs and all the OSC messages are logged as being seen, so they do exist. I assume they are just being received too fast for Isadora to process them.

Any thoughts appreciated!

Thanks

-

The General Service Task setting in the Isadora Preferences defines the rate at which the scene is processed.

For example, if my frame rate is set to 30fps and the General Service Task is 15x per frame, the scene is processed every 2.222ms (1 / (30 × 15) s).

So any data coming in within that window of time will overwrite the previously held value.
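The arithmetic behind that figure, as a small Python sketch. The function name `service_interval_ms` is made up for illustration, and the formula simply assumes the scene is processed once per General Service Task, evenly spaced within each frame.

```python
def service_interval_ms(fps: float, tasks_per_frame: int) -> float:
    """Time between scene-processing passes, in milliseconds.

    Assumes passes are evenly spaced: fps * tasks_per_frame passes per second.
    """
    return 1000.0 / (fps * tasks_per_frame)


# 30 fps with a 15x General Service Task -> 450 passes per second
print(round(service_interval_ms(30, 15), 3))  # 2.222
```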

One thing you might be able to do is slow down the data rate, to allow your scene to process completely before new data arrives.

Depending on what you are doing, it's unlikely you need 2ms updates. Updates at, say, 30 or 60 fps may be perfectly acceptable.

This approach may work for you.

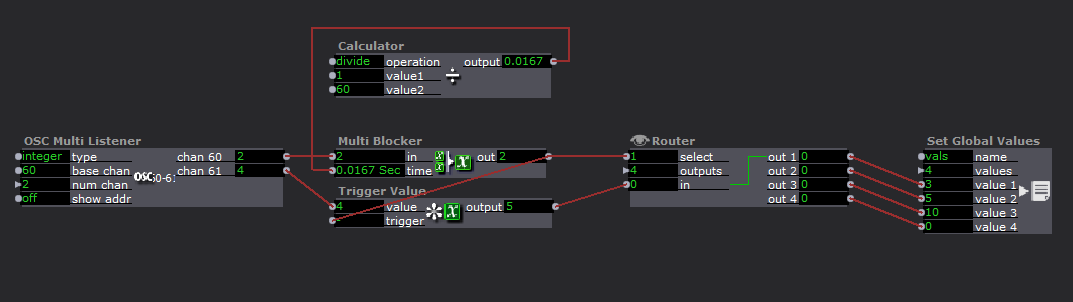

I would think you could speed this up (it is set to 1/60 here) to an interval just longer than the cycle time your scene is running at.

Of course, this method will cause some data to be skipped, but with a sample rate this quick it's unlikely that you will see it.

If you have control of the application sending the data, you would be better served bundling the data further, so that every OSC multi-message contains a full set of your data.
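As a rough illustration of the bundling idea, here is a Python sketch that flattens a full set of index/status pairs into a single argument list (for example `/oscmsg/test 1 1 2 1 3 2 4 1`) and rebuilds it on the other side. The flat pair layout and the function names `pack_statuses` and `unpack_statuses` are assumptions for illustration only; how the sending application would actually lay out its arguments is up to its author.

```python
def pack_statuses(statuses):
    """Flatten {index: status} into one argument list: [i1, s1, i2, s2, ...]."""
    args = []
    for index in sorted(statuses):
        args.extend([index, statuses[index]])
    return args


def unpack_statuses(args):
    """Rebuild {index: status} from a flat [i1, s1, i2, s2, ...] list."""
    if len(args) % 2 != 0:
        raise ValueError("expected an even number of arguments")
    return {args[i]: args[i + 1] for i in range(0, len(args), 2)}


full_set = {1: 1, 2: 1, 3: 2, 4: 1}
args = pack_statuses(full_set)
print(args)                   # [1, 1, 2, 1, 3, 2, 4, 1]
print(unpack_statuses(args))  # {1: 1, 2: 1, 3: 2, 4: 1}
```

With one message carrying the whole set, it no longer matters if only the most recent message survives a processing pass.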

-

It would be interesting to see what OSC data you have coming into Isadora, via a screen grab of the Monitor window while you are receiving OSC from your source. @DusX's solution is really interesting, and there might be another way of organising the data based on what you are receiving.

Best Wishes

Russell

-

I'll come out of the woodwork and state that in this specific case @peuclid is referring to a new feature that Liminal is adding to ZoomOSC that allows for the sending of full frames of information about every user in a Zoom call so that various stats about that user can be leveraged simultaneously for the sake of reconstructing user profiles within the integration platform (Isadora in this case). We are sending these successive OSC packets under the same address because our next update will be moving the software to a more "pure" implementation of OSC standard practices. @DusX has a great solution for "analog" input where dropping a few samples here or there would not create a noticeable difference, but because these packets describe user profiles, any loss would be reflected as a gap of all statistics for a given Zoom participant, which is an issue for online performances. The other software solutions we integrate with do not seem to face these challenges; maybe they log the OSC packets in a different way.

I'm going to meet in the middle here, adding a parameter to the new user interface of ZoomOSC to set the output sending rate such that, when used with Isadora (and when leveraging the General Service Task feature as needed to find a happy medium) the programs will communicate successfully. We love Isadora and want to make sure it can fully utilize our new features.

I have a lot of respect for what Mark is doing to make OSC accessible to the end user in Isadora, and I think I can make some specific feature requests to TT that could ideally retain the intuition of the Isadora OSC workflow but add more compliance with the OSC standards (sanitization of args and addresses, how packets are stored and constructed, etc.).

We'll keep you posted.

-

@DusX I promised to get back around on this, and now I have! Thanks to @mark's latest developments, I can now visually illustrate this issue. Note how the General Service Task is well within bounds for most of these tests, and yet the only delay value that seems to actually impact my results is the final jump, from 10ms of delay to 100ms. At 60 FPS with a 30x General Service Task, we should be updating roughly every 0.56ms (1 / (60 × 30) s), so I could understand 1ms or even 2ms being tight, but 5? 10? And the general lack of improvement when moving from 1 to 10? That suggests there is some spike of activity after the first value is received that prevents subsequent values from propagating.

The reason this will eventually matter is that the user may have hundreds of participants on the call, and with roughly 100ms between list replies in the initial setup, a 600-person Zoom call could take over a minute to get Isadora up to speed. I am using the new control to illustrate, but there are other uses unrelated to control where this could be a larger issue.
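For a sense of scale, a back-of-the-envelope sketch in Python. The function name `setup_time_s` is made up for illustration, and it counts only the inter-message delay, so the result is a lower bound before any other overhead.

```python
def setup_time_s(participants: int, delay_ms: float) -> float:
    """Lower bound on setup time: one list reply per participant, spaced by delay_ms."""
    return participants * delay_ms / 1000.0


print(setup_time_s(600, 100))  # 60.0 seconds from the delay alone
print(setup_time_s(600, 10))   # 6.0 seconds if a 10ms delay were reliable
```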

(Ignore duplicated usernames, the 100ms result is the expected result).