[ANSWERED] OPENNI Line Puppet - Image Puppet/Guru 13(3D Track Hands edit)

-

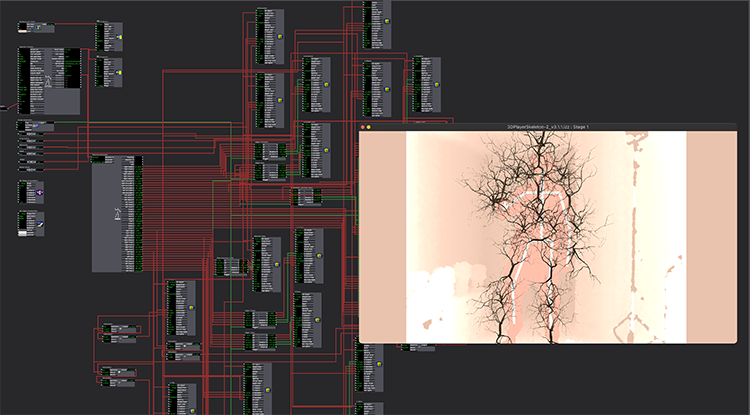

My goal is to make a "paper" puppet character that's mapped to the data from the Skeleton Decoder (an early test is linked below). It seems like all of the math is being done inside Bonemap's OPENNI Line Puppet user actor, but I can't understand it well enough to attach an individual image to each line segment. Any thoughts on ways to approach this?

The amusingly clumsy version linked below was made by modifying the "3D Project - Track Hands" cue of the Guru 13 file. This would work well enough for me, except that when I copy the modified cue into my own project file it no longer works: the outputs of the "3D point to Rot/Trans" actors don't change. When I double-click the JavaScript "3D points to Rot/Trans" actor, it reports this error: "Javascript Error Line 1:Error - Unexpected error reading include file 'three.min.js'"

If I could get the altered file to work within my project that would be great. It does seem, though, like the puppet line actor is doing most of the work for me, and if I could figure out how to bridge the gap from line segment to an image tracked to it, I could get a cleaner result.

Any help would be greatly appreciated.

Best,

Rory

MacBook Pro 13", M1, macOS Monterey | Isadora 3.1.1 | Kinect 1473

-

Hi,

Hey, that paper puppet movie is fun!

There are a number of ways that you might consider taking on the issues of constructing your puppet. And it looks like you have done well so far!

The 3D line puppet that you refer to is built from numerous 3D Line actors (if I remember correctly, 26-27 unique ones). The advantage of these actors is that they can use the raw tracking points to describe the orientation of a line in 3D space, thus recreating the line skeleton. The connections can therefore run directly from the Skeleton Decoder to the start and end points of each 3D Line actor to create each 'part' of the puppet. Because the 3D Line puppet is described entirely by the skeleton tracking points, there is no need to translate the rotation of body/puppet parts to create it...

However, when you start to use images or 3D geometry (3DS files) as the body parts of the puppet, you need to work with rotation points, and unfortunately the 3D Line method is no longer applicable. The actors that manipulate image and 3D model assets do not have parameters for linking the start and end tracking points directly.

So what can you do? Well, you can continue to deconstruct the example patch that uses three.js, or look at some of the other example patches uploaded to this comprehensive forum discussion about creating a motion tracking puppet in Isadora.

To provide some perspective: an image has a central rotation point by default, so perhaps the best you can do is calculate the centre between two tracking points, place the image there, and then apply a rotation based on the Calc Angle 3D actor. That is what I have done in the example skeleton tracking patch here... This patch uses 3DS geometry models, so it does not exactly match your project; however, I hope it provides some context for solving your patch.
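For anyone who prefers to see the centre-plus-angle idea as code, here is a minimal sketch in plain JavaScript. It is not the contents of the example patch, and it does not claim to match what the Calc Angle 3D actor outputs. The function name `segmentToImageTransform`, the `{x, y, z}` point shape and the choice of measuring the angle in the X/Y plane are all assumptions made for illustration.

```javascript
// Hypothetical helper (not part of Isadora or three.js).
// Given the two tracking points of one limb, work out where to put an image
// and how far to rotate it so that it lies along the segment.
function segmentToImageTransform(start, end) {
  const dx = end.x - start.x;
  const dy = end.y - start.y;
  const dz = end.z - start.z;

  return {
    // Midpoint of the segment: an image rotates about its centre,
    // so this is where that centre should sit.
    center: {
      x: (start.x + end.x) / 2,
      y: (start.y + end.y) / 2,
      z: (start.z + end.z) / 2
    },
    // Distance between the joints, useful for scaling the image to fit.
    length: Math.hypot(dx, dy, dz),
    // Assumed convention: rotation about Z in degrees, measured
    // counterclockwise from the +X axis, ignoring depth.
    rotationZ: Math.atan2(dy, dx) * 180 / Math.PI
  };
}

// Example with made-up elbow and wrist positions.
const elbow = { x: 0.0, y: 0.0, z: 1.0 };
const wrist = { x: 1.0, y: 1.0, z: 1.0 };
console.log(segmentToImageTransform(elbow, wrist));
```

A flat "paper" puppet mostly needs the midpoint and the single Z rotation; limbs that swing towards or away from the camera would need the depth difference handled as well, which is where a full 3D rotation (as in the three.js example) comes in.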

Best Wishes

Russell

-

Hi there,

Regarding this part of your question:

> This would work well enough for me, except when I try to copy the modified cue into my own project file it no longer works. The outputs of the "3D point to Rot/Trans" actors don't change. When I double click the javascript "3D points to Rot/Trans" actor there is an error with the JavaScript: "Javascript Error Line 1:Error - Unexpected error reading include file 'three.min.js'"

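You also have to copy the three.min.js file into the same location where your patch lives. three.min.js is an external dependency: the JavaScript actor reads it as an include file, which is why the error appears when the file is missing.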

-