[ANSWERED] Waterfall (particles) simulation

-

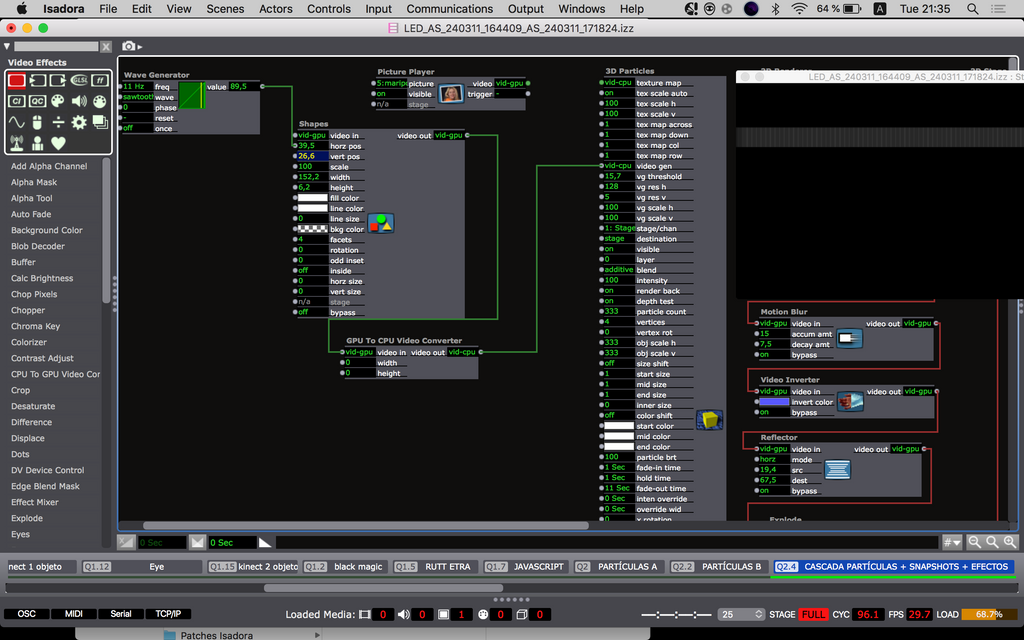

There is actually a way to generate particles across the whole x axis simultaneously by using the Video Gen input. If you use a Shapes actor to generate a line across the top of the screen and convert this to CPU, you can send it to Video Gen. Change the vertical resolution of the VG to something really low. It's a bit less predictable than generating particles by location, but it should work. The video input needs to be something that is constantly moving, so I cheat by vibrating the shape using a Wave Generator.

-

@dbini oh, what great news you gave me!

So you're saying I must use the GPU to CPU converter at the shape's video output? Or did I misunderstand?

Thanks a lot !

Best,

Maxi

-

@dbini Could you point me in the right direction? I'm doing something wrong. At times something happens, but it's not right. I don't know how to use Shapes properly (among other things, haha).

Thank you !

Maxi

-

That patch is looking OK. I think it would help if you increase the Particle Count to something like 10,000. The VG system generates a lot of particles and, if yours have a lifespan of 11 seconds, it will soon reach its limit at 333.
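As a rough back-of-the-envelope check of why the pool fills up: if particles were emitted at a steady rate and each one lived for its full lifespan, the number of live particles would settle at rate × lifespan. This is a simplification of what the Video Gen input actually does, and the function name below is illustrative, not an Isadora parameter.

```python
def max_sustainable_rate(particle_count: int, lifespan_s: float) -> float:
    """Highest steady emission rate (particles per second) that never
    exhausts a pool of `particle_count` particles.

    Assumes a constant emission rate and a fixed lifespan for every particle.
    """
    if lifespan_s <= 0:
        raise ValueError("lifespan_s must be positive")
    return particle_count / lifespan_s


# With the values mentioned in the post: an 11 second lifespan.
small_pool = max_sustainable_rate(333, 11.0)      # roughly 30 particles per second
large_pool = max_sustainable_rate(10_000, 11.0)   # roughly 909 particles per second
```

Under these assumptions, a pool of 333 is exhausted by anything emitting faster than about 30 particles per second, which a video-driven generator can easily exceed.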

Also, I thought it might be good to use a more varied video input instead of Shapes. Maybe it would look better with a line of text that is constantly changing; this might give you a more organic stream of particles.

-

Hi,

Love where this thread is going. Here is a demonstration patch that shows a method of live particle interaction using 'Gravity Field' parameters. It isn't optical flow but it mimics the effect demonstrated in the TD video. I have used a couple of shape actors here, but also tried it with iKeleton OSC input and it worked great with human body tracking driving the shapes.

interactive_particles.zip

Best Wishes

Russell

-

@bonemap It looks incredible ! thank you

I see that you used the 3DModel actor, instead of the 3D particles like I was doing

Now the question would be how to replace those shapes with human interaction to reach the same visual result?

PS: at least on my computer, the 2 shapes with the Path macro bring the Load level to 80 percent.

Best,

Maxi-RIL

-

Hi,

I would have to say that using Isadora particles in general requires a lot of CPU processing power. It is not the Shapes and Circular Path that are creating the load; it is the particle system and the real-time interactivity computation. On my machine the load is under 40%, but I would say it is still high. You can reduce the load by reducing the number of particles and reducing the rate of the top pulse generator. Many of my patches that include particles are carefully managed, as they can quickly load the CPU. There may also be memory leaks and bugs in these old Isadora modules (that are arguably due for an update).

The other question you have about human body tracking is something you can tackle from several angles. However, I would be developing the body tracking and then bringing it to the particle system. For example, I believe skeleton tracking is well documented for Isadora. I used the iKeleton OSC iPhone app wrist parameter stream to move the Shapes actor in my interactive particles patch using my hands. The wrist OSC x and y data is connected to the horizontal and vertical position inputs of the shape actor and then calibrated using Limit-Scale Value modules.
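The calibration step amounts to clamping the incoming value and rescaling it to the range the Shapes position inputs expect. Here is a minimal sketch of that idea, assuming the wrist data arrives normalised to 0..1 and the position inputs want -50..50; both ranges are hypothetical and will depend on your own setup, and `limit_scale` is an illustrative helper, not Isadora code.

```python
def limit_scale(value, limit_min, limit_max, out_min, out_max):
    """Clamp `value` to [limit_min, limit_max], then map it linearly
    onto [out_min, out_max]."""
    if limit_max == limit_min:
        raise ValueError("limit_min and limit_max must differ")
    clamped = max(limit_min, min(limit_max, value))
    t = (clamped - limit_min) / (limit_max - limit_min)
    return out_min + t * (out_max - out_min)


# Hypothetical ranges: OSC wrist x in 0..1, horizontal position in -50..50.
shape_h = limit_scale(0.75, 0.0, 1.0, -50.0, 50.0)   # inside the input range
shape_h_clamped = limit_scale(1.4, 0.0, 1.0, -50.0, 50.0)  # out-of-range input is clamped first
```

Clamping before scaling keeps a noisy tracking value from throwing the shape off screen.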

You can try connecting a live stream of your performer directly to the Gravity Field video input of the 3D Model Particles actor; it has a Threshold calibration parameter there as well. However, if your computer is already showing a high load with my simple patch, it is only going to get worse when you ask it to compute even more.

Best wishes

Russell

-

@bonemap thanks bonemap!

I did a quick test and the Video in Watcher turned out to be more efficient than the 2 shape actors (with the Threshold almost at 100%).

What I don't quite understand is that "grid" of square points that appears if I increase GFh and GFv. I understand that it is the resolution of the GF of the video input, but I still don't understand why they are seen.

PS: Other important variables: lighting, the distance of the object/body from the camera, and the amount of threshold.

Best,

Maxi

-

@ril said:

You are right about the efficiency of the Video in Watcher. This is an excellent option for live-stream video interactivity. Thanks for trying it out. One reason it works so well is that the gravity field parameters I have used are set to such a low resolution. This is great to remember for future project development!

"I understand that it is the resolution of the GF of the video input, but I still don't understand why they are seen"

There is a parameter to hide the dots: 'gf visualize' can be toggled on/off. Its main purpose is to let you accurately calibrate the simulation to the input video using 'gf scale h', 'gf scale v', 'gf res v' and 'gf res h'. In my example, it was critical to see the gravity field dots align with the shape actors' input video (and I had forgotten to toggle off the parameter before posting the patch).
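To picture why a low gravity field resolution is cheap and why the dots form a coarse grid, here is a conceptual sketch only. It assumes the field is built by averaging the video brightness in a small number of cells and comparing each cell to a threshold; this is my guess at the general idea, not Isadora's actual implementation, and the 0..255 threshold scale is also an assumption.

```python
def gravity_grid(frame, res_h, res_v, threshold):
    """Reduce a greyscale frame (list of rows of 0..255 values) to a
    res_h x res_v grid of booleans: True where a cell's mean brightness
    exceeds `threshold`."""
    height, width = len(frame), len(frame[0])
    if not (0 < res_h <= width and 0 < res_v <= height):
        raise ValueError("grid resolution must fit inside the frame")
    grid = []
    for gy in range(res_v):
        y0, y1 = gy * height // res_v, (gy + 1) * height // res_v
        row = []
        for gx in range(res_h):
            x0, x1 = gx * width // res_h, (gx + 1) * width // res_h
            cell = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(cell) / len(cell) > threshold)
        grid.append(row)
    return grid


# A 4x4 frame with a bright 2x2 block in the top-left corner.
frame = [
    [255, 255, 0, 0],
    [255, 255, 0, 0],
    [0,   0,   0, 0],
    [0,   0,   0, 0],
]
field = gravity_grid(frame, res_h=2, res_v=2, threshold=128)
```

Each grid cell corresponds to one dot in this picture, so raising the resolution multiplies the number of cells that have to be evaluated every frame.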

It's great that you are progressing with the gravity field video input. Please post some discussion of how you arrive at an outcome.

Best wishes

Russell

-

I did a second quick test using Mark Coniglio's video of the hand with a black background and your patch works incredibly well!

What you would have to think about is how, in an installation situation, to track bodies efficiently without black backgrounds and well-defined shiny objects like in Mark's video. With that, we can start developing immersive situations with Isadora!!

Best, Maxi-RIL

-

Previously, I have used several methods for isolating performers. One is a depth camera (Kinect etc.) video feed, because this isolates the human figure by calibrating a depth plane/distance, i.e. OpenNI. However, you can also use the 'Difference' video actor to isolate just the moving elements of a live video input. There are also thermal imaging cameras (I have an old flir sr6).
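The frame-differencing idea can be sketched in a few lines: compare each pixel with the same pixel in the previous frame and keep only what changed. This is a generic illustration using plain lists of greyscale values, not a description of what the 'Difference' actor does internally; the threshold value is arbitrary.

```python
def difference_mask(previous, current, threshold=30):
    """Return a mask with 255 where a pixel changed by more than
    `threshold` between two greyscale frames, and 0 elsewhere."""
    return [
        [255 if abs(c - p) > threshold else 0 for p, c in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


# A static grey background (100) with one bright pixel (200) moving one step right.
previous = [[100, 100, 100],
            [100, 200, 100]]
current = [[100, 100, 100],
           [100, 100, 200]]
mask = difference_mask(previous, current)
```

Only the two pixels the bright spot left and entered are lit in the mask; the static background disappears, which is why this approach does not need a black backdrop.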

Best Wishes

Russell

-

@bonemap the Difference actor made the "difference"... works great!

ps: How do I insert the video right here so you can see it?

-

Great to hear what a difference it makes!

If you want to share a video you can try one of the following:

Upload to YouTube or Vimeo, and then paste the link here.

Take a screen grab with a GIF-making tool like Giphy Capture and drop the .gif file into the forum thread (if you do this, you will need to keep the .gif file under 3 MB, or it will not upload).

Best wishes,

Russell

-

Dear @bonemap

Thanks to your guide I was able to learn a lot about 3D models and generate different systems, including this one that goes in a different direction compared to the original examples. https://www.instagram.com/reel/C4jL42CvyQY/?igsh=M3BwZzNwZnZheHk4

My specific question now is the following: do you think it is possible to develop a system of particles/3D models that stay still and shake/move only when someone moves or passes by? That is to say, moving away from the "waterfall" type example of continuous movement (ascending or descending): a system that fills the entire screen but remains still until someone moves or passes by.

Something like this? https://www.instagram.com/p/C5V9L2qr3Nf/?igsh=bHY5aXFqYm9zYWxw

Thanks a lot!

Best,

Maxi-RIL

-

Hi,

I hope you are well and in good spirits. Of course, you can use Isadora for a similar style of interactive display. In your example by the Japanese artist there is the appearance of a lot of particles in a thick patterning. Consequently, considerations around patch efficiency will likely be critical. Alternatively, consider a layered approach, for example, recording a particle scene to video and then compositing this video behind your interactive particles in a new scene. In this way you increase the visual quantity of particles but the real-time interactivity is optimised to a top image layer. You can also do calculations to determine the maximum number of particles that can be present without affecting your frame rate. Use the ‘Performance’ watcher module to help make the calculations based on what is going into your particle system's frequency and life span inputs. You may have noticed that the number of particles input is not dynamic, and resetting this will kill all currently active instances. So, it is a setting that needs to be calculated and set at the start.

Regarding your primary question, numerous exciting and dynamic ways exist to determine and control the spatial placement of your particles in the 3D viewport of your scene. This flexibility allows for creative experimentation and can enhance the interactivity of your display. Here are some examples I have shared with the Isadora User Group on Facebook:

https://m.facebook.com/video.p...

here is a demonstration patch for that: demo-particles-04.zip

https://m.facebook.com/video.php/?video_id=2138613486192707

These two examples use an external source for the x, y, and z positioning data for 1: a grid and 2: a sphere. These data sets were generated using Meshlab software (open source and free). Alternatively, the distribution of particles can be randomly generated by wave generator modules set to random. You will want to spread the particles in confined distances along your x and y-axis. Both particle systems are dynamic using the gravity field settings (that you already know about).
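If you would rather script the position data than export it from Meshlab, something along these lines could generate comparable x, y, z sets. This is a swapped-in approach, not how the demonstration patches were made: the sphere uses a golden-angle (Fibonacci) spiral, and all function names and dimensions are illustrative.

```python
import math
import random


def grid_positions(cols, rows, width, height, z=0.0):
    """Evenly spaced points on a flat grid centred on the origin."""
    points = []
    for r in range(rows):
        for c in range(cols):
            x = (c / (cols - 1) - 0.5) * width if cols > 1 else 0.0
            y = (r / (rows - 1) - 0.5) * height if rows > 1 else 0.0
            points.append((x, y, z))
    return points


def sphere_positions(count, radius):
    """Roughly even points on a sphere using a golden-angle spiral."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    points = []
    for i in range(count):
        y = 1.0 - 2.0 * (i + 0.5) / count   # runs from near +1 down to near -1
        ring = math.sqrt(1.0 - y * y)       # radius of the horizontal ring at this height
        theta = golden_angle * i
        points.append((radius * ring * math.cos(theta),
                       radius * y,
                       radius * ring * math.sin(theta)))
    return points


def random_positions(count, width, height, seed=None):
    """Random points confined to a width x height rectangle at z = 0."""
    rng = random.Random(seed)
    return [(rng.uniform(-width / 2, width / 2),
             rng.uniform(-height / 2, height / 2),
             0.0) for _ in range(count)]


grid = grid_positions(cols=4, rows=3, width=10.0, height=6.0)
sphere = sphere_positions(count=200, radius=2.0)
scatter = random_positions(count=50, width=10.0, height=6.0, seed=1)
```

The resulting tuples could be written to a text file and fed to the particle position inputs one row per pulse, in the same way as an externally generated data set.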

Once you've set up the spatial distribution of your particles, the next step is to make the gravity field parameters inside your Isadora patch respond to the tracking system. This is a key aspect of the interactivity of your system. The options for this include a camera-based vision system like Isadora’s blob tracking eyes++ or potentially OpenNI depth imaging. In the example video by the Japanese artist, you can see the camera pressed against the bottom of the shopfront glass and a short stem of wires leading to the bottom edge, indicating the use of a tracking system.

After all, it is a comparatively simple interactive system with just the passing motion of human movement to consider. How would it respond to someone dancing into it? And you have to consider who it is for; the passerby appears uninterested, but the camera documenting as a 'witness' is the audience in this case.

Best wishes

Russell

-

@bonemap thanks for that fast response !

What do you mean by "use the ‘Performance’ watcher module"? And how do I achieve this: "You can also do calculations to determine the maximum number of particles that can be present without affecting your frame rate"?

thanks again !

Best,

Maxi-RIL

-

Hello,

For best results when working with particles you can adjust 'frame rate' and 'service task' properties in the Isadora settings (menu: Isadora/Settings).

Set preferences that will be best for the capacity of your computer. For example: Target Frame Rate - 30 FPS and General Service Tasks - 5x Per Frame.

Once you have made your settings, enter the same values into the 'Calculate optimised Pulse triggers and Particle Count' User actor, which is included in the demonstration patch available here.

This will calculate the available frequency range for your particle parameters based on Isadora Preference settings.

So...

1/ The parameter for the Frames Per Second is first set in the Isadora Preferences.

2/ The parameter for General Service Tasks is first set in the Isadora Preferences.

Calculate the efficient number of particles based on the Pulse trigger frequency and total life span of the particle.

3/ set Fade-in time

4/ set Hold time

5/ set Fade-out time

RESET the user actor.

Keep an eye on the LOAD rating and if it goes too high reduce the settings in the Isadora Preferences and reenter the new settings accordingly.

You will still achieve an acceptable particle effect at Target Frame Rate 24 FPS and General Service Tasks - 12x Per Frame. If your computer is struggling, try Target Frame Rate 15 FPS and General Service Tasks 15x Per Frame. Be kind to your computer!
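For anyone who wants to see the arithmetic behind the steps above, here is a minimal sketch. It is not the contents of the User actor. It assumes (1) a particle's total life span is fade-in + hold + fade-out, (2) the particle count needed is pulse frequency × life span, and (3) a pulse cannot usefully fire faster than frame rate × service tasks per frame. The third point in particular is my assumption.

```python
import math


def particle_budget(target_fps, service_tasks_per_frame, pulse_hz,
                    fade_in_s, hold_s, fade_out_s):
    """Return (max_pulse_hz, lifespan_s, particle_count) for the given settings."""
    lifespan_s = fade_in_s + hold_s + fade_out_s
    # ASSUMPTION: pulses are only serviced once per service task.
    max_pulse_hz = target_fps * service_tasks_per_frame
    usable_hz = min(pulse_hz, max_pulse_hz)
    # Enough particles that none has to be recycled before its life span ends.
    particle_count = math.ceil(usable_hz * lifespan_s)
    return max_pulse_hz, lifespan_s, particle_count


# Example: 30 FPS, 5x service tasks, a 20 Hz pulse, 1 s fade-in, 4 s hold, 1 s fade-out.
max_hz, lifespan, count = particle_budget(30, 5, 20.0, 1.0, 4.0, 1.0)
```

With these example values the life span is 6 seconds and the pulse runs at 20 Hz, so about 120 particles are alive at any one time; setting the particle count much higher than that only reserves capacity that is never used.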