Second screen out to an iPhone

-

Hi, I needed to come up with a VR-ish game, so I started experimenting with some simple VR iPhone apps. I liked the idea of using them wirelessly with an iPhone, but starting the app and the video wasn't very easy, and most of the graphics looked cheap. So I thought that for a first simple test I would use Izzy: then I would have control over starting and stopping the video, and could also send a quality full-screen video out plus a low-quality left/right-eye output to an iPhone.

1st problem: The "Air Display" program can't handle much bandwidth, and there's a strange codec thing going on with the phone. If I export to anything other than .3gp, the video looks bad.

2nd problem: Even if I settle for the low-quality video on the phone, Izzy doesn't read .3gp files.

And yes, I know it's not really VR; it's just for a game.

-

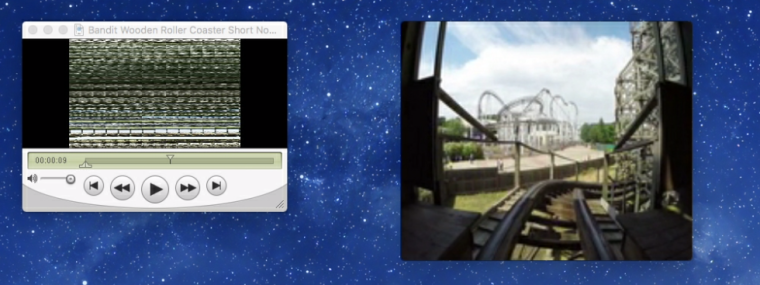

I forgot to add: the pic shows the iPhone with a QuickTime video simply dragged over to it. Left is an MPEG-4, right is the .3gp.

-

1) I've done this with TCPSyphon, and can recommend that, though now I'd say that an NDI solution is probably better, maybe with Sienna's apps.

2) Someone once suggested writing a GLSL shader to display a 360º video (which may be simpler if you know how to do it), but I've been doing 360º playback by building a simple 3D sphere in Blender, using the video as the texture, and putting a virtual stage "camera" at the centre of the sphere. That works fine for me, where I'm just panning around in a 360º image/video.
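
For anyone curious what the shader route would have to compute, here is a rough sketch in plain Python (not GLSL, and not anything taken from Isadora). It assumes an equirectangular source image, a y-up coordinate system, and that pan 0 / tilt 0 looks at the middle of the image. The function names `view_direction` and `equirect_uv` are made up for illustration.

```python
import math

def view_direction(pan_deg, tilt_deg):
    """Unit vector for a camera at the sphere's centre (assumed y-up, +z forward)."""
    pan = math.radians(pan_deg)
    tilt = math.radians(tilt_deg)
    x = math.cos(tilt) * math.sin(pan)
    y = math.sin(tilt)
    z = math.cos(tilt) * math.cos(pan)
    return (x, y, z)

def equirect_uv(direction):
    """Map a view direction to (u, v) in an equirectangular image.

    u runs 0..1 left to right (longitude), v runs 0..1 top to bottom (latitude).
    """
    x, y, z = direction
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length
    y = max(-1.0, min(1.0, y))  # guard asin against rounding error
    u = 0.5 + math.atan2(x, z) / (2.0 * math.pi)
    v = 0.5 - math.asin(y) / math.pi
    return (u, v)

# Looking straight ahead samples the centre of the image.
print(equirect_uv(view_direction(0, 0)))
```

A shader would do the same lookup per pixel, using the direction through each pixel instead of one direction for the whole view.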

-

I would be very interested to try out what you described in terms of the Blender sphere with the 360 degree video texture and a virtual stage "camera" inside it, if you're willing to share your method. If not, no worries :)

-

I don't have the patch with me at the moment, but I'll share what I can. It is, in fact, fairly simple. Any 360º video player just maps the image to a sphere and lets you pan around with a virtual camera.

The very first thing I did is pretty easy to replicate. I found a random equirectangular 360º image and a .3ds Moon model on the internet, stuck them together in a 3D Player, and it worked. Mostly. You'll need to set the 3D Player's destination to renderer and then create a Virtual Stage as your camera. There are a bunch of angle/position settings to adjust, but it's fairly straightforward.
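
If you'd rather understand what a sphere model like that contains, here is a minimal sketch in plain Python of a latitude/longitude sphere whose texture coordinates line up with an equirectangular image. It is only an illustration under my own assumptions (y-up, `rings` and `segments` as made-up parameter names); it doesn't write a .3ds file and has nothing to do with how the Moon model I found was actually built.

```python
import math

def uv_sphere(rings=8, segments=16, radius=1.0):
    """Return (vertices, uvs, faces) for a lat/long sphere.

    Each vertex gets a (u, v) pair so that u follows longitude and v follows
    latitude, which is the layout of an equirectangular image.
    """
    vertices = []
    uvs = []
    for i in range(rings + 1):
        v = i / rings
        polar = v * math.pi              # 0 at the top pole, pi at the bottom
        for j in range(segments + 1):
            u = j / segments
            azimuth = u * 2.0 * math.pi  # once around the sphere
            x = radius * math.sin(polar) * math.sin(azimuth)
            y = radius * math.cos(polar)
            z = radius * math.sin(polar) * math.cos(azimuth)
            vertices.append((x, y, z))
            uvs.append((u, v))

    # Two triangles per grid cell; the ones touching the poles are degenerate,
    # which is harmless for a sketch like this.
    faces = []
    stride = segments + 1
    for i in range(rings):
        for j in range(segments):
            a = i * stride + j
            b = a + 1
            c = a + stride
            d = c + 1
            faces.append((a, c, b))
            faces.append((b, c, d))
    return vertices, uvs, faces

vertices, uvs, faces = uv_sphere()
print(len(vertices), len(faces))
```

The seam column is duplicated on purpose (u = 0 and u = 1 share positions), so the image can wrap around without the texture coordinates jumping.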

What I'm doing now is a bit more custom - I'm taking multiple live camera feeds and combining them to get a live partial-360 panorama. The 'sphere' is actually made up of multiple curved surfaces, each with its own 3D Player, and custom-made for the shape of the camera lens. That gets a bit tricky, but it works pretty well.

I'd never used Blender before working on this, and what I use it for here is pretty basic and easy to get the hang of. This tutorial video was a huge resource, though obviously I diverged a bit from it.

Feel free (anyone) to email or message me too if you want to talk about it. I'll try to remember to post the patch when I get back to my desktop next week.

A couple tricks:

- Adjust the y axis (tilt) with the 3D Player, and x (pan) and zoom with the Virtual Stage. Using the Virtual Stage for both pan & tilt is... non-intuitive.

- The default settings for a Virtual Stage include a small positive value in the Z direction. Setting this back to zero should center the camera.

- Whatever I'm doing to make the Blender models, the texture always winds up on the outside of the sphere (which isn't visible from a camera at the centre of the sphere). Rather than trying to fix my 3D model, I decided to just check 'render back' in the 3D Player settings. Easy.
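
On that last trick: my guess (and it is only a guess) is that the faces of my sphere point outward, so from the inside you are looking at their backs. If you did want to fix the model instead of using 'render back', the usual idea is to reverse the vertex order of every face, which flips its normal; Blender has a flip-normals operation in edit mode for this. Here is a small plain-Python sketch of the idea, with hypothetical helper names and a single triangle standing in for the sphere:

```python
def face_normal(vertices, face):
    """Unnormalised normal of a triangle, from the cross product of two edges."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = (vertices[i] for i in face)
    ux, uy, uz = bx - ax, by - ay, bz - az
    vx, vy, vz = cx - ax, cy - ay, cz - az
    return (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)

def faces_outward(vertices, face):
    """True if the face normal points away from the origin (the sphere's centre)."""
    nx, ny, nz = face_normal(vertices, face)
    # The sum of the corner positions points the same way as the centroid.
    cx = sum(vertices[i][0] for i in face)
    cy = sum(vertices[i][1] for i in face)
    cz = sum(vertices[i][2] for i in face)
    return nx * cx + ny * cy + nz * cz > 0

def flip_winding(faces):
    """Reverse the vertex order of every face, which flips each normal."""
    return [tuple(reversed(face)) for face in faces]

# One triangle on the unit sphere, wound so that it faces outward.
vertices = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
faces = [(0, 1, 2)]

flipped = flip_winding(faces)
print(faces_outward(vertices, faces[0]), faces_outward(vertices, flipped[0]))
```

Checking 'render back' gets the same visible result without touching the model, which is why I didn't bother.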

-

I'll test these ideas.

Thank you.