Integrated graphics for work monitor?

-

Follow-up question to this thread: https://community.troikatronix.com/topic/6780/new-pc-to-connect-4-projectors/

In Windows, is there anything wrong with using the integrated graphics output from the motherboard for the work monitor and keeping all the GPU outputs for projectors?

People seem to be passionate about both answers, so I wonder what you think.

Cheers,

Hugh

-

It depends what you have on your 'Work' monitor.

-

Okay, by work monitor I meant the Isadora interface and anything connected to that.

Thanks,

Hugh

-

@citizenjoe yes I am also interested in that.

@mark maybe you can help us out with some questions about multiple GPUs.

I know that adding extra video cards is a possible minefield, so maybe we can clear a few things up. Seemingly, if images don't need to travel between the two video cards, things can be OK. So let's say you have 2 cards and each is feeding 3 video projectors. If all three VPs are showing a single video file spread over 3 outputs, that is 1 OpenGL context. If the setup for the next three VPs is similar, a separate video split over 3 outputs is a second OpenGL context. This would logically seem safe and efficient, but I am not totally sure how Isadora handles this, so correct me where I get it wrong.

Creating a shared OpenGL context requires moving data from one GPU to another, a costly process that may cause more harm than good. Seemingly this is something that NVLink is meant to take care of (it is the updated form of SLI, but it is aimed at moving data between cards rather than having both cards share rendering work). I don't have NVLink on any machines, so I don't know if it helps with a shared OpenGL context.
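To put a rough number on "costly", here is a back-of-the-envelope sketch of how many bytes per second a cross-GPU copy would have to move. It assumes uncompressed 8-bit RGBA frames (4 bytes per pixel) copied once per frame, and it ignores driver overhead and the stalls from synchronizing two GPUs, which may well be the bigger cost in practice. The function names are just for illustration.

```python
# Back-of-the-envelope estimate of cross-GPU copy traffic.
# Assumes uncompressed 8-bit RGBA frames (4 bytes/pixel) copied once per frame;
# ignores driver overhead and synchronization stalls.


def frame_bytes(width: int, height: int, bytes_per_pixel: int = 4) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def copy_rate_gb_per_s(width: int, height: int, fps: float,
                       streams: int = 1, bytes_per_pixel: int = 4) -> float:
    """GB/s (1 GB = 1e9 bytes) needed to copy `streams` frames at `fps`."""
    return frame_bytes(width, height, bytes_per_pixel) * fps * streams / 1e9


if __name__ == "__main__":
    # One 1080p60 stream, then three of them (e.g. previews of three outputs).
    print(f"1 x 1080p60: {copy_rate_gb_per_s(1920, 1080, 60):.2f} GB/s")
    print(f"3 x 1080p60: {copy_rate_gb_per_s(1920, 1080, 60, streams=3):.2f} GB/s")
```

That works out to roughly 0.5 GB/s for one 1080p60 stream and about 1.5 GB/s for three. Raw bandwidth alone may not be the whole story, though; waiting for the two GPUs to sync could matter more.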

When it comes to the monitor showing only the Isadora interface, a few things come to mind. Extending the scenario above, let's say there are 2 GPUs plus a CPU with an integrated GPU, and the monitor showing only the Isadora interface is connected to the onboard GPU via the motherboard output. The first issue is that we can hover over video connections in the Isadora interface and see a small preview of the video stream, which means the onboard GPU needs access to the same OpenGL context used by both cards; that seems like a lot of travel. The monitor on Eyes or Eyes++ also has a preview, which again implies a shared OpenGL context. Lastly, showing stage previews or using monitors on the control panel will also require a shared OpenGL context, so again data gets copied back and forth.

If I am correct about these assumptions, can you tell us a few things? Can a shared OpenGL context be dynamically created and destroyed? That is, when I hover over a connection to see a video preview, the shared context is created so I can see the preview, and then destroyed so the GPUs return to full power once the preview is no longer shown. The same question applies to stage previews on the monitor showing only the Isadora interface: if I switch to previews on that monitor, with no outputs on the actual GPUs, what is happening with the OpenGL context?

I suspect that the context is not dynamically created, but I wonder (if my assumptions are correct so far) whether it would be useful to have a multi-GPU mode, where stage previews don't use a shared context but switch to either the GPU for the control screen or the output, and maybe the hover-over-connection preview is disabled to make sure the GPUs are not wasting resources.
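The "create on demand, destroy when unused" idea is essentially reference counting. Here is a minimal hypothetical sketch of the bookkeeping only: `PreviewBridge` is not an Isadora class or API, and it does not touch OpenGL at all. Each preview (link hover, Eyes/Eyes++ monitor, stage preview) would acquire the bridge when it opens and release it when it closes, so the cross-GPU copy path would exist only while at least one preview is open.

```python
class PreviewBridge:
    """Hypothetical: counts open previews that need a cross-GPU copy.

    Models the bookkeeping only; no OpenGL objects are created here.
    """

    def __init__(self) -> None:
        self._viewers = 0
        self.active = False  # True while the (hypothetical) copy path exists

    @property
    def viewers(self) -> int:
        return self._viewers

    def acquire(self) -> None:
        """A preview opened (link hover, Eyes monitor, stage preview)."""
        self._viewers += 1
        if self._viewers == 1:
            self.active = True  # first viewer: set up the copy path

    def release(self) -> None:
        """A preview closed; tear down the copy path when nobody is watching."""
        if self._viewers == 0:
            raise RuntimeError("release() called without a matching acquire()")
        self._viewers -= 1
        if self._viewers == 0:
            self.active = False  # last viewer gone: stop copying frames
```

For example, hovering over a link while a stage preview is open gives two viewers; closing both brings the count back to zero, at which point the copy path would be torn down and the GPUs would no longer pay for it.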

Another question: can Isadora take advantage of NVLink? This may rule out efficiently using an onboard GPU output for the monitor showing only the Isadora interface, but if it is an efficient way to use multiple GPUs, it could be a step toward getting a lot more outputs easily.

Or maybe I have understood this whole thing the wrong way. At any rate, it would be great to have some clarity, and even better, some performance results from multi-GPU systems in various configurations (and maybe compared against single-GPU systems using something like a Datapath unit instead).

Fred

-

I have a gaming laptop where the built-in display can only be connected through the integrated Intel GPU, while all others run through the nVidia GTX 1070.

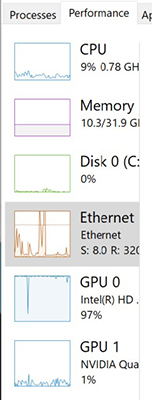

As long as I tell Isadora.exe to only use the nVidia card... it runs well. I can put Isadora on my main display and watch task manager to verify low Intel usage and higher nVidia usage while running a show.
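If you'd rather log GPU load than watch Task Manager during a show, `nvidia-smi` (installed with the NVIDIA driver) can report it. Below is a small sketch that runs its CSV query and parses the result; the helper names are made up for illustration. It only sees NVIDIA GPUs, so Intel usage still has to come from Task Manager, and some laptop GPUs report `[N/A]` for utilization, which the parser turns into `None`.

```python
import subprocess
from typing import Optional

# nvidia-smi query flags; prints one CSV line per NVIDIA GPU.
QUERY = ["nvidia-smi", "--query-gpu=index,name,utilization.gpu",
         "--format=csv,noheader,nounits"]


def parse_utilization(csv_text: str) -> dict[int, tuple[str, Optional[int]]]:
    """Turn lines like '0, NVIDIA GeForce GTX 1070, 35' into {0: (name, 35)}."""
    result: dict[int, tuple[str, Optional[int]]] = {}
    for line in csv_text.strip().splitlines():
        index, rest = line.split(",", 1)   # index is the first field
        name, util = rest.rsplit(",", 1)   # utilization is the last field
        util = util.strip()
        # Some GPUs report "[N/A]" instead of a number.
        result[int(index)] = (name.strip(), int(util) if util.isdigit() else None)
    return result


def sample_utilization() -> dict[int, tuple[str, Optional[int]]]:
    """Run nvidia-smi once and parse its output."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_utilization(out)


if __name__ == "__main__":
    try:
        for idx, (name, util) in sample_utilization().items():
            shown = "n/a" if util is None else f"{util}%"
            print(f"GPU {idx} ({name}): {shown}")
    except (FileNotFoundError, subprocess.CalledProcessError) as err:
        print(f"Could not query nvidia-smi: {err}")
```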

So yes, you can use them together. Personally, I wouldn't buy a machine with this config again, though. I would prefer a laptop with a single GPU; however, these are hard to find. Only laptops built on Clevo kits seem to offer this these days (Sager, Eurocom, and others).

Isadora offers video thumbnails on links and control panel previews (and more), which both need access to the video. How exactly does this work on a system with 2 GPUs where Isadora.exe is told to use the dedicated GPU? I don't know whether Isadora is rendered on the dedicated GPU and the image is then transferred to the Intel GPU, or whether the Intel GPU does some of the rendering. In either case, some textures are transferred.

I suspect that exactly how this works may even be system-specific.

-

@dusx Laptops are a very different question: they have some more specific hardware switches and often share physical outputs between GPUs. I am much more interested in tower machines with separate physical outputs.

Edit: I re-read your post and see that in your config the screen is specifically on a separate GPU. My mistake.

-

So, in Vietgone, on the #3 desktop in my signature, I was running 6 projectors/stages out of the GPU (with the aid of a Matrox TH2G) and the "work" monitor from the integrated Iris 630 graphics on the CPU. I had to do it because, having used all the GPU outputs and my only Matrox unit, I couldn't run another monitor from the GPU. It seemed to work well, but the OS got confused sometimes, and everyone I asked about it (tech people on site) recommended against it. That's why I'm asking here!

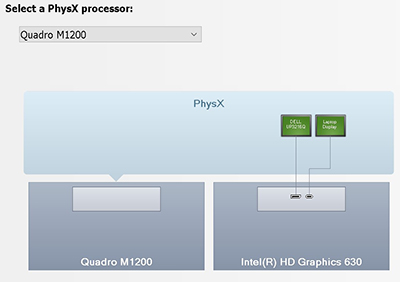

I'm having a terrible time with my laptop, on the other hand. I have the default set to the GPU for everything, but no matter how I run external projectors and things, they seem to go through the integrated Intel graphics, only using the nVidia GPU to assist. See the image below.

GPU 0 (Intel) gets pinned at the top and everything freezes. This happens even when the output goes through the Thunderbolt port, which I thought was supposed to bypass the integrated graphics completely!

It's particularly bad on Zoom-type calls, especially when I want to share a screen. Basically, everything freezes. I've tried so many "fixes" without success.

Cheers,

Hugh

-

Hi there @CitizenJoe,

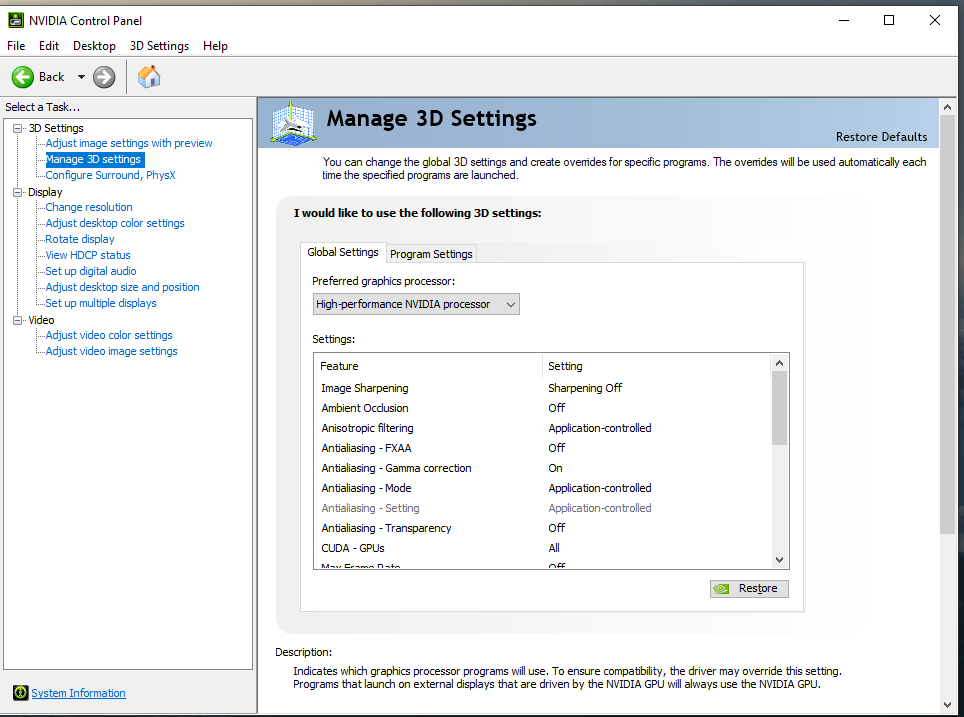

The screen where you set your Nvidia GPU to be used is actually for Nvidia's physics simulation product, PhysX; this is not the part of the GPU that is responsible for the display output! To set this, go to your desktop > right mouse button > Nvidia Control Panel (or look up how you can force this in the BIOS).

In the Nvidia Control Panel, go to Manage 3D settings and force programs to use the dedicated GPU, overriding any other settings in Program Settings if you have those set up. Normally you can also right-click a program on your desktop and tell Windows to use the dedicated graphics card.
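On the Windows side, Settings > System > Display > Graphics stores a per-app GPU preference in the registry. As far as I know it lives under `HKEY_CURRENT_USER\Software\Microsoft\DirectX\UserGpuPreferences`, with the full path to the .exe as the value name and `GpuPreference=2;` meaning high performance; treat that as an assumption and use the Settings UI if in doubt. The sketch below writes that entry with Python's `winreg` module. Whether the NVIDIA Control Panel profile or this Windows setting wins when they disagree may depend on the driver.

```python
import sys

# Registry location used by Windows' per-app "Graphics" preference
# (assumption: recent Windows 10/11 builds; verify on your machine).
KEY_PATH = r"Software\Microsoft\DirectX\UserGpuPreferences"

# 0 = let Windows decide, 1 = power saving (integrated), 2 = high performance.
PREFERENCES = {"default": 0, "power_saving": 1, "high_performance": 2}


def preference_string(mode: str) -> str:
    """Build the REG_SZ data Windows expects, e.g. 'GpuPreference=2;'."""
    return f"GpuPreference={PREFERENCES[mode]};"


def set_gpu_preference(exe_path: str, mode: str = "high_performance") -> None:
    """Write the per-app GPU preference for exe_path (Windows only)."""
    if sys.platform != "win32":
        raise OSError("GPU preferences are stored in the Windows registry")
    import winreg  # only available on Windows

    # CreateKey opens the key, creating it if it does not exist yet.
    with winreg.CreateKey(winreg.HKEY_CURRENT_USER, KEY_PATH) as key:
        winreg.SetValueEx(key, exe_path, 0, winreg.REG_SZ,
                          preference_string(mode))
```

Usage on Windows would be something like `set_gpu_preference(r"C:\Program Files\Isadora\Isadora.exe")`, where that install path is hypothetical; point it at wherever Isadora.exe actually lives on your machine.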

-

@juriaan Yes, I know. I've done that!! "I have the default set to the GPU for everything..." Sorry if it was unclear.

Hugh

-

Are you able to disable the integrated Intel GPU in the BIOS? On my particular Dell laptop I can't, but I believe it's possible on some models. If you could, would it help?

-

Just saw this.... might be helpful too:

https://hexus.net/tech/news/so...

-

I wish I could say for sure what the video flow in your system is. Unfortunately, this seems to be one aspect of Windows machines that is up to the manufacturer and isn't very standardized.

When you look at 'Setup multiple displays' in the nVidia control panel, are your displays shown under your nVidia gpu?

In my case, my laptop screen is NOT listed in the 'Setup multiple displays' section of the control panel (it is only configurable via the Intel video control panel). I do find it a little strange that your external connections are shown as part of the Intel HD card; mine are shown within the nVidia card/block (in the PhysX section).

My testing hasn't shown any difference in performance between running Isadora on my internal laptop display and on an external display (Intel-managed vs. nVidia-managed display), assuming Isadora is set up to use the dedicated GPU (otherwise things are unstable).

It seems that some video transfer is synced between the 2 gpus at all times.

@Fred, some work is being done on profiling and optimizing GPU usage on Windows, so I am hopeful we will see some improvements in the 'near' future :)