Motion Tracking through Isadora

-

Hi everyone,

Currently I am working on a project that will hopefully combine motion tracking with particle effects for a dance concert. The hope is that the dancers will be able to move and the background particles will interact with their bodies. Obviously this is a multistep process; however, right now I am stuck on the actual motion tracking. I have researched a variety of ways to motion track, and so far the best option seems to be a Kinect with NIMate to track the dancers. However, I am concerned that the Kinect doesn't have enough range to allow tracking on a stage. Does anyone have any experience using a Kinect for motion tracking, and if so, what is the range like for these purposes? My current research says only 10 ft, which feels very impractical. Thanks for any help you can give me, -

A big topic.

You are right that the Kinect sensor is limited in its reach. We have to accept that it was only ever designed for living room/bedroom use. There are small pockets of people around the world using multiple Kinects to track large spaces, but this is complicated and I can't really point you to any links or suggestions.

Another option is blob detection, which Isadora can do using a normal camera/USB web camera or similar, but this also has its drawbacks. You can read more about this method on my blog: https://vjskulpture.wordpress.com/tag/motion-tracking/ -

The Xbox 360 Kinect manages 15 ft x 15 ft in my kitchen. I can tie two together, front of stage and rear of stage, or left wing and right wing, to get decent, reliable coverage of about 25 ft x 15 ft. It's not as complicated as you might think. Multi-layer blob detection on the Kinect's depth image can be done pre-Isadora in the Kinect middleware, if you use the right middleware for the job (@skulpture - will be dropping a multiblob patch in the Dropbox folder from the Physics Engine thread this evening hopefully - needs refinement)...

I'm more n' more coming to the conclusion that for typical uses in this community, NI Mate isn't the right tool for what most seem to be wanting to do. Skeletons aren't particularly useful... It's blob detection and depth layers that make the most sense and are the most practical. Skeleton comes in handy for detail, but more generally - blobs rock and give you way more options.

This is all blob detection using dynamically-generated PNGs as the source, screen-capped in realtime: http://youtu.be/3CF7qfa3MFo

I'll be adding in a depth & multiblob separated Kinect feed to it tonight/tomorrow.

-

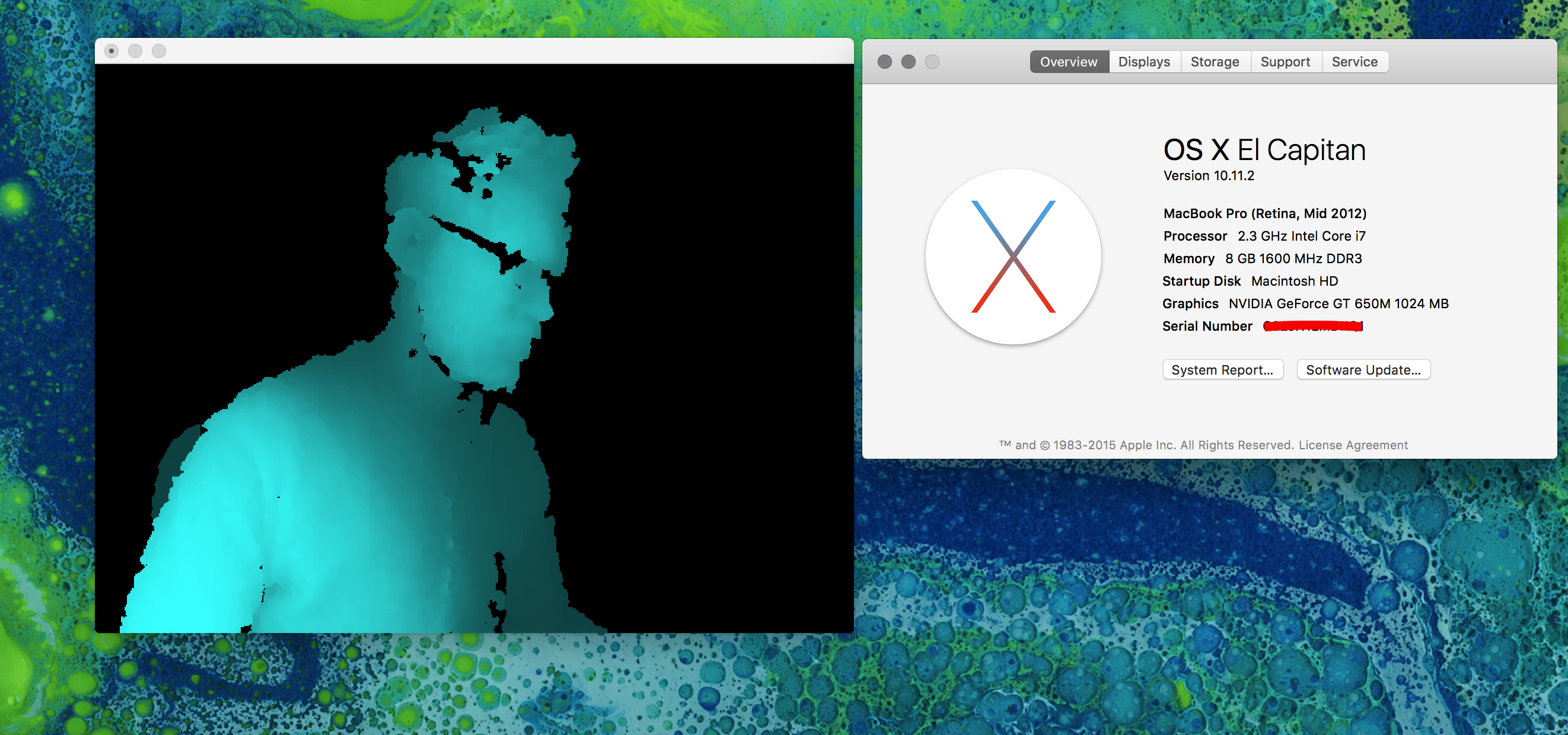

I've been working a lot on these questions lately for a theatre project.

I agree with @Marci that skeleton detection isn't necessarily the best solution: it requires a setup phase which is quite painful in the middle of a performance (but if you want to go that way I would recommend having a look at Synapse).

I've been using a Processing sketch with the OpenKinect library to get the depth image from the Kinect and send it to Isadora through Syphon, where I do the blob detection. It might not be the most efficient solution, but it's easy to get working and good enough for me. I manage to get an image more than 7 meters wide. But the further away you go, the more unstable the result...

I'd be curious to know which solution you go for in the end!

@Marci Your particle video is really nice, I'd be curious to see how you do that as well :) -

Been in bed ill since Friday evening so not had chance to tweak it up for sharing, but as soon as it's done I'll be posting the Processing src & Izzy patches to the Dropbox share I linked to above... :)

-

NB: This is Mac OS X only!

Grab Processing 2 from here: [Mac OS X](http://download.processing.org/processing-2.2.1-macosx.zip)

(If you already have Processing 3 installed, just rename it in your apps folder to Processing 3. Processing 2 is more stable for Kinect & Syphon purposes.)

Open Processing 2, head to Sketch > Import Library > Add Library. Find and install...

- Syphon

- oscP5

- Open Kinect for Processing

You'll also need the flob library... download libraries.zip (attached), extract it and drop it into your Processing folder.

Now plug in your Kinect, download and extract Playlist_Sketch.zip, open the file Playlist_Sketch.pde in Processing 2, hit the Play button top left, and click on the output window.

You'll start up on KinectFabric. Hold an arm out in front of you. Press 'D' to see the depth image, and use the up and down arrows to move the depth threshold until your hand is red. Press 'D' again to turn off the depth image and play with the curtain.

Now, press 8. You'll flip to Particles. Press 'D' again to reveal the depth image. Sit steady with a hand outstretched, and particles should be generated and flock to it. Move it around and the flock will follow. Stand up and move around slowly... the flock will flock to your entire blob. Click onscreen with the mouse and the particles are released to roam free. Click again and they'll head back to your blob silhouette. Press 'D' again to turn off the depth image and play away! Again, the up & down keys adjust the depth threshold.

Press 7. Now you're viewing the particle text sketch. Either sit and wait, or click onscreen with your mouse to initiate the first particle burst. From then on it's timed. If you look at the source code (07_particlestext tab) and scroll all the way to the bottom, you'll find an array of words. It just runs through those in a loop, randomising position... have a look at the code for how these are rendered as images then scanned pixel-by-pixel...

There are other interactive sketches on keys 0 through 8.

- q & a tilt your Kinect up & down.

- g toggles Gravity on and off for the Curtain (init sketch / key 5).

- t toggles from grid to chains for the Curtain (init sketch / key 5).

- On the 6 key you'll find the multi blob sketch.

- On the 4 key is a triangulated blob visualiser.

- On the 3 key, a hand-activated torch (if this causes a crash, make a folder called ImageTorch in your Processing folder, download the contents of the same folder from yonder: https://www.dropbox.com/home/Sensadora/Processing%202.x and stick it in there. Then it should work).

- On the 2 key is a mouse-activated sketch. Click and drag.

- On the 1 key is another mouse-activated sketch... just drag over the particles.

- On the 0 key is a particle-based streamer sketch which should also follow your hand/blob around the screen.

https://youtu.be/O5cKPxaaufk

Have a play! Look at the source. Much is commented. The majority are sketches from OpenProcessing.org - I've just tweaked them to work in this context / add Kinect / Syphon / OSC / embed them into a single controllable sketch.

Scroll to the bottom of the first tab and look for the if(key== statements to see what keys do what.

I recommend you listen for OSC streams in Isadora and fire up a Syphon listener, as quite a lot gets output for you to integrate, depending on which sketch you're using.

PS: This is a work in progress... by no means complete. -

Thanks a lot for that @Marci! I'm gonna have a serious look at it tomorrow!

Glad you're not sick anymore! :) -

If you look at how sketch 7 works, it creates a PGraphics element with a white background, and renders the text on that rather than displaying it on screen. We then iterate over x and y checking each pixel, looking for a black one (or possibly red - can't remember what state I left the patch in). Wherever a matching pixel is found, a particle is added to an array with that x/y position - the particle is then released from 0,-100, with acceleration and velocity, and is attracted towards its pre-destined x,y co-ords... it overshoots and spirals back towards it, losing velocity as it goes, until it flocks around its spot.
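The scan-and-attract idea described above could be sketched roughly as follows. This is not the code from sketch 7: it is plain Java with no Processing dependency, the class names `TargetParticle` and `TextParticles` are made up for illustration, and a 2D brightness array stands in for the off-screen text image. The pull and damping update is one simple way to get the overshoot-then-settle behaviour; the original may well do it differently.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical particle that is pulled towards a fixed target position. */
final class TargetParticle {
    double x, y;          // current position
    double vx, vy;        // current velocity
    final double tx, ty;  // target, taken from a matching pixel

    TargetParticle(double startX, double startY, double tx, double ty) {
        this.x = startX;
        this.y = startY;
        this.tx = tx;
        this.ty = ty;
    }

    /** Advance one frame: accelerate towards the target, then damp the velocity. */
    void update(double pull, double damping) {
        vx = (vx + (tx - x) * pull) * damping;
        vy = (vy + (ty - y) * pull) * damping;
        x += vx;
        y += vy;
    }
}

final class TextParticles {
    /** Create one particle for every pixel darker than the threshold. */
    static List<TargetParticle> spawn(int[][] brightness, int threshold) {
        List<TargetParticle> particles = new ArrayList<>();
        for (int y = 0; y < brightness.length; y++) {
            for (int x = 0; x < brightness[y].length; x++) {
                if (brightness[y][x] < threshold) {
                    // Every particle is released from the same off-screen point (0, -100).
                    particles.add(new TargetParticle(0, -100, x, y));
                }
            }
        }
        return particles;
    }
}
```

Calling `update(0.05, 0.9)` once per frame on each particle makes it swing past its target and settle after a few dozen frames; both values are arbitrary starting points to tune by eye.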

Exactly the same principle can be used on the raw depth image from a Kinect (sketch 8). Whilst going over x and y, we check the raw depth of that pixel, and if it falls within a defined range (our minimum and maximum depth thresholds) we colour it red. Using the same technique as for the particle text, we add a particle to an array for any matching red pixel, and launch them from 0,-100.

The trick is keeping the particles in check, so when we're dealing with large blobs such as in sketch 8, we have a skip variable, so we skip and only look at every 3rd pixel. Lowering the resolution, essentially. Otherwise you'll find the CPU gets stressed and up spin all your fans. We give particles a lifespan, and once their counter (in frames) has reached 0, they're marked to be removed the next time they exit the boundaries of the screen. Again, this helps keep the load in check.

There are all sorts of optimisations that could be done. These two demonstrate depth layer tracking to emulate motion tracking in a not-really-efficient way.

Sketch 6 is the multi blob sketch that I need to work with next.

At the moment this is all limited to using a single Kinect feed (i.e. just the depth feed), mainly because I'm on a Retina MacBook with USB3. The USB library that is used in SimpleOpenNI and OpenKinect has issues with isochronous packets and tends to crash out if you try using more than one feed (e.g. to do a coloured particle cloud, textured from the RGB camera image, you'd need both RGB and depth feeds - on a USB3 MacBook this is likely to crash out rapidly). This is why a lot of other Kinect middleware out there can be flaky for some users (indeed, many of them are actually Processing sketches compiled out as a standalone executable)...

If you have an older MacBook WITHOUT USB3 (non-retina MBPro 17" with AMD GPU for instance) - then the sketches above could all have the RGB feed enabled and particles textured from that... or we could pull in the user feed and handle skeleton alongside blob detection etc.

A decent powered USB2 hub may get round the issues for retina MBPro users, but I haven't got one so can't confirm that. -
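For anyone who wants the gist of the depth-threshold scan, the skip step and the particle lifespan described above without digging through the full sketch, here is a rough stand-alone version. It is a sketch under assumptions, not the code from sketch 8: plain Java, a row-major `int[]` standing in for the raw depth array, and the invented names `DepthBlob`, `AgingParticle` and `ParticleCull`.

```java
import java.util.ArrayList;
import java.util.List;

final class DepthBlob {
    /**
     * Return {x, y} for every sampled pixel whose raw depth lies in
     * [minDepth, maxDepth]. Only every skip-th row and column is checked;
     * skip must be at least 1.
     */
    static List<int[]> sample(int[] rawDepth, int width, int height,
                              int minDepth, int maxDepth, int skip) {
        List<int[]> hits = new ArrayList<>();
        for (int y = 0; y < height; y += skip) {
            for (int x = 0; x < width; x += skip) {
                int d = rawDepth[x + y * width]; // row-major, like a pixels[] array
                if (d >= minDepth && d <= maxDepth) {
                    hits.add(new int[] {x, y});
                }
            }
        }
        return hits;
    }
}

/** Hypothetical particle carrying a lifespan counter measured in frames. */
final class AgingParticle {
    double x, y;     // position in screen pixels
    int framesLeft;  // frames remaining before the particle may be removed

    AgingParticle(double x, double y, int lifespan) {
        this.x = x;
        this.y = y;
        this.framesLeft = lifespan;
    }
}

final class ParticleCull {
    /** Age every particle by one frame, then drop expired ones that are off-screen. */
    static void ageAndCull(List<AgingParticle> particles, int width, int height) {
        for (AgingParticle p : particles) {
            if (p.framesLeft > 0) {
                p.framesLeft--;
            }
        }
        particles.removeIf(p -> p.framesLeft == 0
                && (p.x < 0 || p.x >= width || p.y < 0 || p.y >= height));
    }
}
```

With `skip` set to 3, a 640 x 480 depth frame is sampled at roughly one ninth of its pixels, which is where the CPU saving comes from. Each hit would then spawn a particle targeting that x/y, exactly as in the text sketch.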

Thank you Marci. It's going to take some time for me to get up to speed on this, but I am thankful for the path you've provided.

-

@Marci thanks for all this, it's going to take me a while to go through these sketches...

But it's very close to something I want to do, and I feel like it's a really good hint for me.

I didn't know about that USB3 issue, since I (now gladly) own an old MBP with USB2.

Thanks again! -

Hi Marci.

Thank you for sharing your tutorial with code examples. It's so informative and is something I've been trying to work out for a while. I'm having some stability issues and sometimes get errors running Processing that sometimes result in a crash. I wonder if it could be to do with versions.

I'm curious as to which Kinect sensor you are using. Do you think that could have anything to do with it? Mine is the first generation.

Thanks again! I appreciate it. -

Me? Kinect for Xbox 360... 1417 model (x2). The only issue with these is if running over USB3 and using multiple feeds, as noted above.

-

@Marci

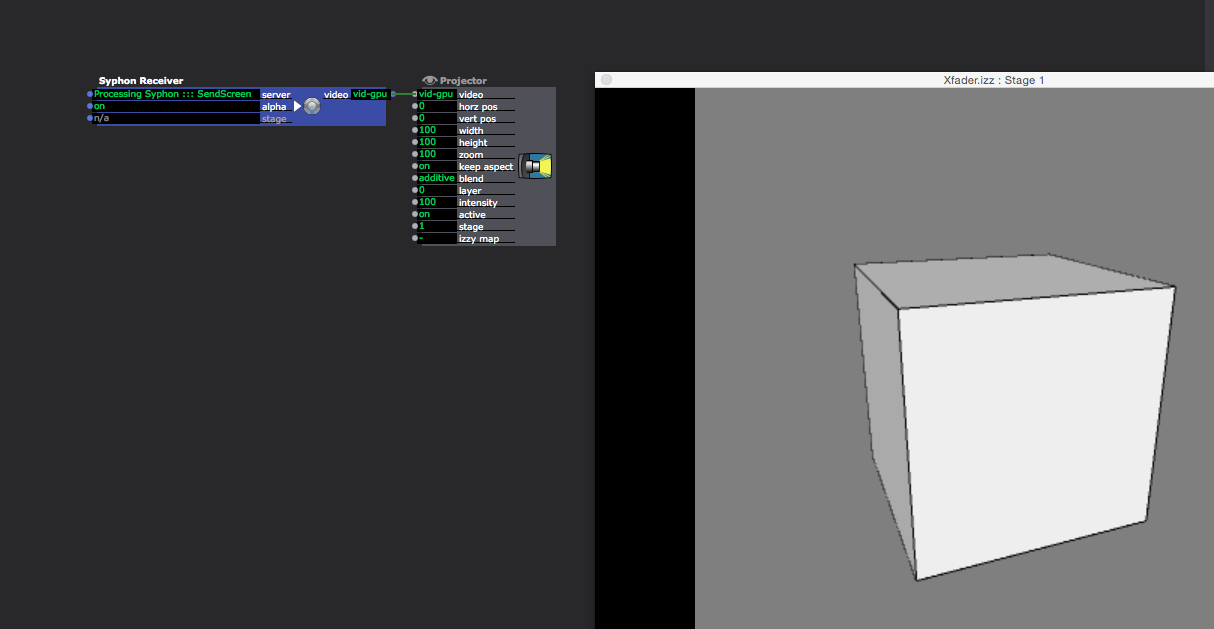

Finally got it working, but I can't get it into Isadora :( Processing does not appear in the Syphon receiver. -

@skulpture

Finally getting to motion tracking / Kinect... etc. I looked into your tutorials today. I even downloaded Synapse, but all I get is a black preview image. Could it be that it is not working in "Yosemite"? Or is it the Max patch that is missing (also in your tutorial)?

Thanks for any further information. p. -

Hi. Yes this is an old tutorial I'm afraid. I will have another look into it for you as soon as I can.

-

"finally got it working but i can't get it into isadora :( Processing does not appear in the syphon receiver."

Hit CMD-SHIFT-O in Processing and it should give you the Examples browser... Expand Contributed Libraries, find the Syphon library's examples, run them and check they show up in Isadora. -

@skulpture Yes, after moving (more like jumping) in front of the Kinect, it woke up after all. I even managed to get the skeleton working, but when I went to my laptop (meaning closer to the sensor) it really freaked out :( so I might end up using NIMate...

-

Anything based on SimpleOpenNI (including NIMate) takes an age to initialise skeleton properly unless it can see full height (feet to head) in shot. When you get too close, frequently skeleton legs start to go whappy - skeleton doesn't like it when it can't work out where a limb is, and just makes something up, which often freaks out whatever the Kinect is driving... One of many reasons I avoid using skeleton and prefer depth blobs. Otherwise, you have to add your own checking routines to remove limb markers between your middleware and output, or disable whatever that limb is driving when the coordinates exceed screen / stage / world boundaries or just vanish.
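A checking routine of the kind mentioned above, sitting between the middleware and whatever the skeleton drives, might look something like this. It is only an illustration under assumptions: plain Java, joints held as name-to-`{x, y}` pairs, and an invented `JointFilter` class. No real middleware hands you data in exactly this shape.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class JointFilter {
    /**
     * A joint is usable only if both coordinates fall inside the stage rectangle.
     * NaN and infinite values fail these comparisons, so they are rejected too.
     */
    static boolean isValid(double x, double y, double width, double height) {
        return x >= 0 && x <= width && y >= 0 && y <= height;
    }

    /** Copy only the valid joints; vanished (null) or out-of-bounds joints are dropped. */
    static Map<String, double[]> filter(Map<String, double[]> joints,
                                        double width, double height) {
        Map<String, double[]> kept = new LinkedHashMap<>();
        for (Map.Entry<String, double[]> e : joints.entrySet()) {
            double[] p = e.getValue();
            if (p != null && p.length >= 2 && isValid(p[0], p[1], width, height)) {
                kept.put(e.getKey(), p);
            }
        }
        return kept;
    }
}
```

Whatever a limb drives can then check whether its joint survived the filter and hold its last good value (or switch off) when it didn't.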