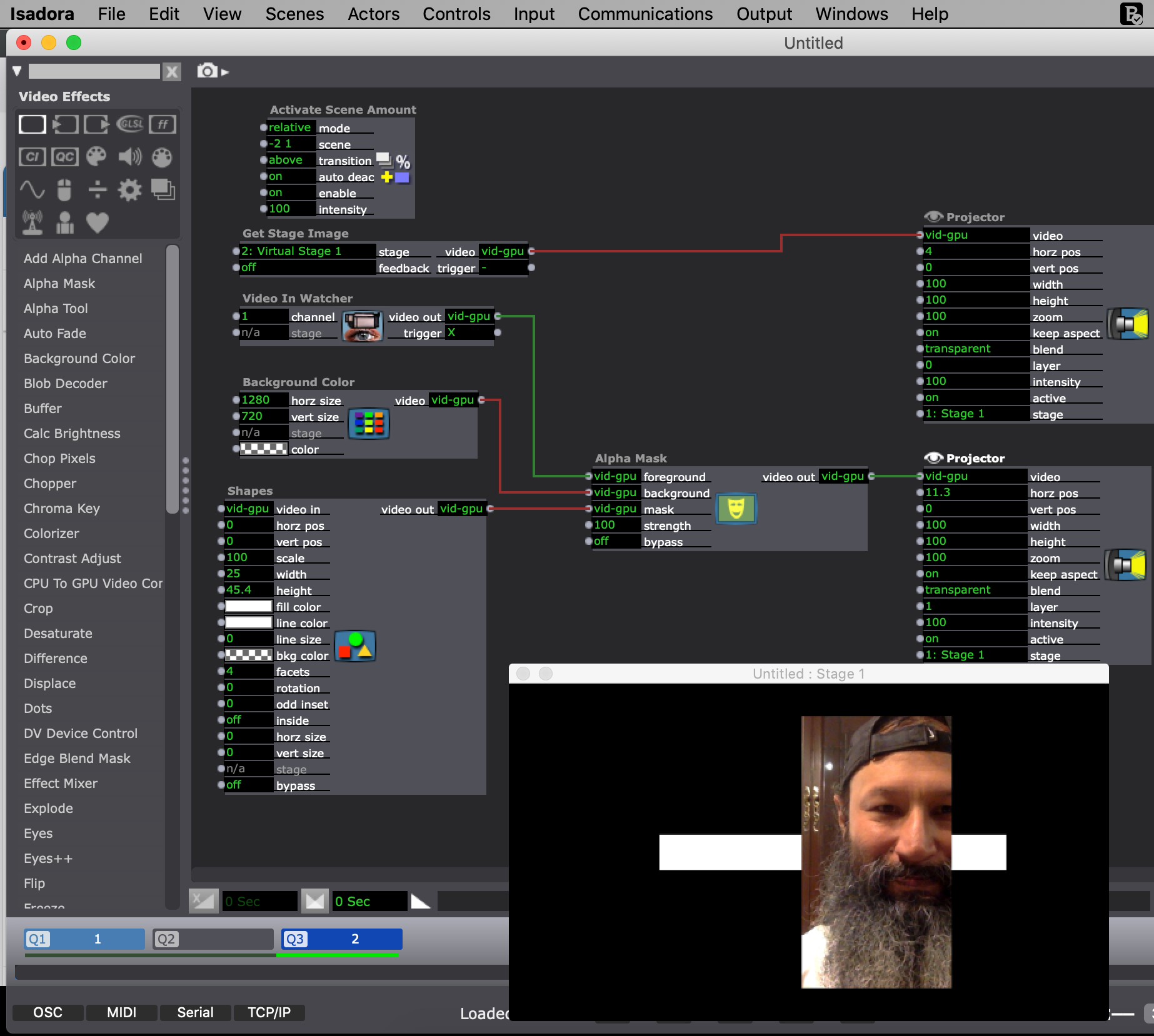

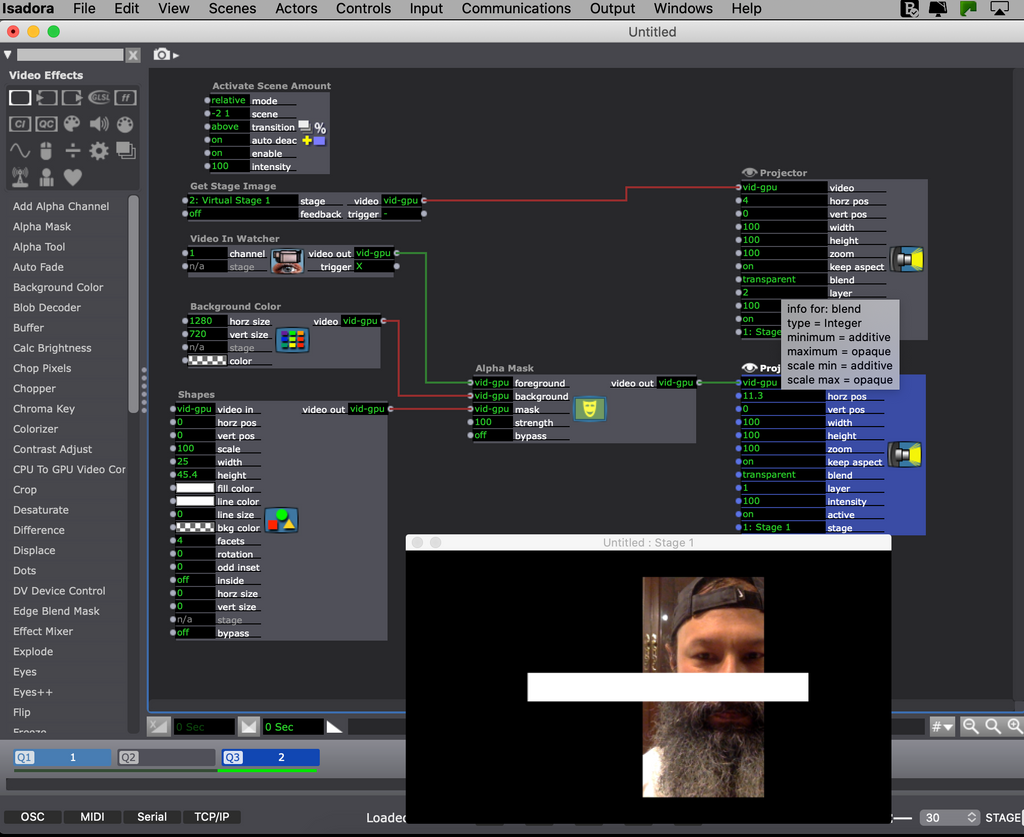

Hi! In the secondary scene you want to activate, send the video to a virtual stage. Then, in the active main scene, use the "Get Stage Image" actor to pick up that image and send it to a Projector actor on whichever layer you need. This way, alpha transparency is preserved, and you can arrange the layers as you like.

Best wishes!

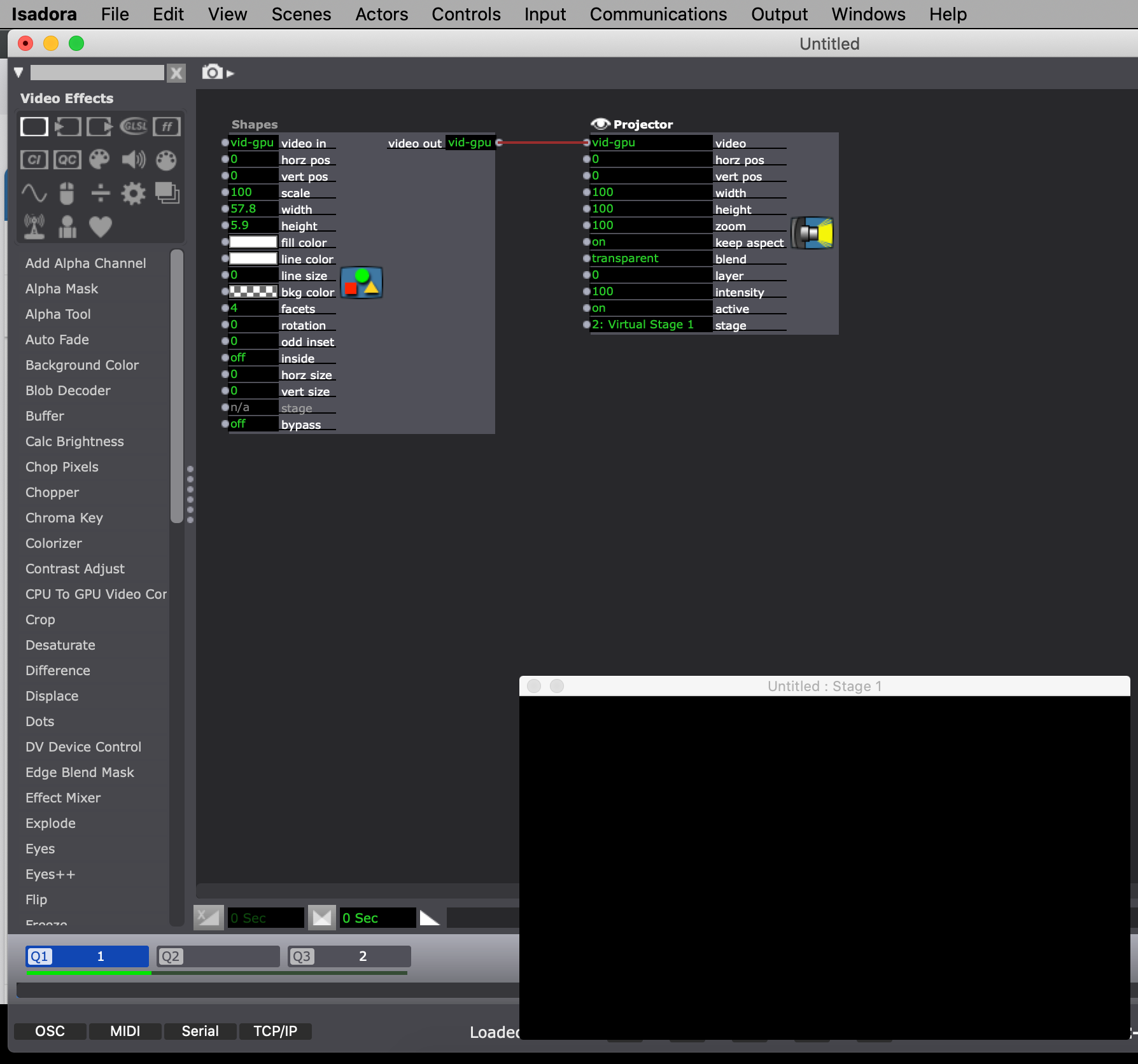

I'm trying to have an activated scene be underneath the content in the current scene, instead of being additive or above.

The only options under Activate Scene are "additive" and "above". I would really love to be able to activate a scene and then have the content of the new cue sit above it. For example, I have a background video playing (the activated scene), and in the next scene I have a ball rolling on screen along with other content, while the background video keeps rolling underneath. There doesn't seem to be a way to do this without the layers being additive. My request is that the Activate Scene options include a "below" mode.
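To make the difference between the blend modes concrete, here is a minimal per-pixel sketch in Python. It is generic compositing arithmetic, not Isadora's actual implementation; the function names `blend_additive` and `blend_over` are hypothetical, and colors are assumed to be RGB tuples in the 0.0 to 1.0 range.

```python
# Generic compositing math for one pixel. This is NOT Isadora's code;
# function names and the 0.0-1.0 RGB convention are assumptions.

def blend_additive(bottom, top):
    """Additive: channel values are summed and clamped, so both layers show through."""
    return tuple(min(1.0, b + t) for b, t in zip(bottom, top))


def blend_over(bottom, top, top_alpha):
    """Layered ("over"): the top layer hides the bottom in proportion to its alpha."""
    return tuple(t * top_alpha + b * (1.0 - top_alpha) for b, t in zip(bottom, top))


background_video = (0.0, 0.0, 1.0)  # pixel from the activated scene (blue)
ball = (1.0, 0.0, 0.0)              # pixel from the current scene (red)

# Additive: the ball is tinted by the video behind it.
print(blend_additive(background_video, ball))   # (1.0, 0.0, 1.0)

# Requested "below" behaviour: activated scene underneath, current scene on top.
print(blend_over(background_video, ball, 1.0))  # (1.0, 0.0, 0.0)
```

With a fully opaque ball (`top_alpha` of 1.0), the layered result is the ball's own color, whereas the additive result mixes in the background. Which layer is passed as `bottom` decides which scene ends up underneath.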

I'm trying to avoid too many internal scene triggers, so that each cue is its own scene. Is there an alternative for this?

@michel Thanks Michel for the quick and helpful reply ~ will try.

Hi Ray

Are you using a single graphics card to run the three monitors and six projectors?

If you are using two graphics cards, that might be the issue.

Can you give us a bit more information on your setup too?

Eamon

How are you outputting to 8 projectors (and how many monitors)? I'm sure that must have something to do with it.

I'm sure that @dusx knows more about this than I do!

Cheers,

Hugh

@jjhp3

Dear John, it’s quite possible that the Micca player is the source of the issue. A good way to test this is to play the H.264 file exported from Final Cut directly on your computer using VLC. If it looks correct there, then the Micca G2 is very likely the culprit.

If it doesn’t look right in VLC, try playing the original ProRes version (before it went through Final Cut). If that one looks correct, then the problem is introduced during the H.264 encoding step.
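Alongside the visual check in VLC, the two files can also be compared numerically. The sketch below is one possible approach, assuming FFmpeg's `ffprobe` is installed and on the PATH (nothing in this thread says it is); the helper names `probe_video` and `display_size` and the file names in the usage note are hypothetical.

```python
import json
import subprocess
from fractions import Fraction


def probe_video(path):
    """Return the first video stream of `path` as a dict (requires ffprobe)."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_entries", "stream=width,height,sample_aspect_ratio",
         "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)["streams"][0]


def display_size(stream):
    """Displayed (width, height) after applying the sample (pixel) aspect ratio."""
    sar = Fraction(1)  # assume square pixels unless the file says otherwise
    text = stream.get("sample_aspect_ratio", "1:1")
    if ":" in text:
        num, den = (int(part) for part in text.split(":"))
        if num > 0 and den > 0:
            sar = Fraction(num, den)
    return (round(stream["width"] * sar), stream["height"])
```

For example, `display_size(probe_video("prores_original.mov"))` and `display_size(probe_video("h264_export.mp4"))` should return the same pair. If they differ, the change happened during export rather than in the player.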

Izzy will start with 6 projectors but crashes as soon as I add another one. Weird.

Hi All

I'm running into a problem with a new video installation that requires accurate mapping. Using Isadora into a video projector, I carefully map the output. That video is output through the Syphon actor (set to the same resolution and the ProRes codec). Then I import it into FCP so I can repeat the video clip until it's an hour or so long, and export it as H.264. That goes on my Micca G2 (set for HD output) so the video can play continuously during the exhibit. The problem is that the video size is not the same: it is a bit smaller and generally a little wonky in aspect ratio. Not a lot, but enough that many adjustments to the projector position are necessary. Is the path to the finished video at fault, or is it the Micca? I've used this system several times before, but without the need for inch accuracy. Thanks for any insight, John

Did that first thing, no luck. Tried re-installing, no luck. Unplugged all the projectors (but kept all 3 monitors connected) and it started. Now I'll try it with all 8 projectors.

Thanks for your help

Ray